The growing need for data-driven decision-making in competitive businesses has resulted in a notable increase in the demand for skilled Data Analysts. This course covers an in-depth understanding of data analysis using SQL, Python, statistical techniques, Advanced Microsoft Excel, and data visualization.

This advanced Data Analytics certification program includes multiple case studies, quizzes, and industry-focused projects.

Online Live Classroom Sessions:

You will gain a deeper understanding of the Data Analytics domain by completing the capstone projects and multiple electives.

Pursuing a career as a Data Analyst offers competitive pay and the opportunity to work in data-driven industries.

According to the latest data from IBM, there will be 49.2 million job opportunities in data analytics by 2030. The same data indicates 15% annual growth from 2020 to 2030.

Data Analyst professionals make an average of about ₹7,00,000 per annum in India; with more experience, that amount can increase to ₹70,00,000 per annum.

According to Glassdoor, the average income of a Data Analyst in the United States is about US$62,453 per year. With more experience, this may increase to US$95,000 per year.

| Role | Definition | Technologies Used |
| --- | --- | --- |
| Data Scientist | Responsible for predictive analytics, machine learning operations, and statistical modeling to generate insights and build complex models from large datasets. | Python, R, TensorFlow, PyTorch, Hadoop, Spark, SQL, NoSQL, Jupyter |
| Data Analyst | Analyzing and interpreting datasets to generate reports and dashboards, assisting in identifying trends and monitoring KPI metrics. | Excel, SQL, Tableau, Power BI, Python, R, Google Analytics |
| Business Analyst | Develop solutions for business needs and data solutions. Also responsible for optimizing processes and making informed decisions with strategic insights. | Excel, Power BI, Tableau, Jira, Confluence, SQL |

Here are some compelling reasons to look for:

A data analyst collects, processes, and analyzes data to help companies make accurate decisions. Their essential tasks include data collection, cleaning, analysis, visualization, and reporting.

55% Average Salary Hike

$1,22,000 Highest Salary

40 LPA Highest Salary

10000+ Career Transitions

300+ Hiring Partners

Career Transition Handbook

*Past record is no guarantee of future job prospects

Data Analysts perform the entire life-cycle of data analysis, create reports and dashboards, identify trends, and monitor KPI metrics.

A data scientist performs statistical analysis and statistical modeling to build machine learning systems, visualize insights, and develop complex models from large datasets.

A data analytics specialist collects, organizes, interprets, and summarizes numerical data to provide usable information.

Create data visualizations, such as reports, graphs, presentations, and dashboards, that are eye-catching, interactive, and easy to understand.

Identify, develop, and execute business analysis techniques to allow the team to make essential business decisions.

Produce detailed reports and dashboards of high quality to give intuitive presentations.

Proficient in SQL

Knowledge of Data Wrangling Techniques

Analyzing Complex Datasets

Predictive Models and Algorithms

Skilled in Data Visualization Techniques

Time Series Analysis and Forecasting

Strong Foundation in Machine Learning

Data Modeling and Reporting in Power BI

Expertise in Advanced Statistics

Data Mining Techniques

Statistical Computing

EMI Starts at

₹5,000

We partnered with financing companies to provide very competitive finance options at 0% interest rate

Financing Partners

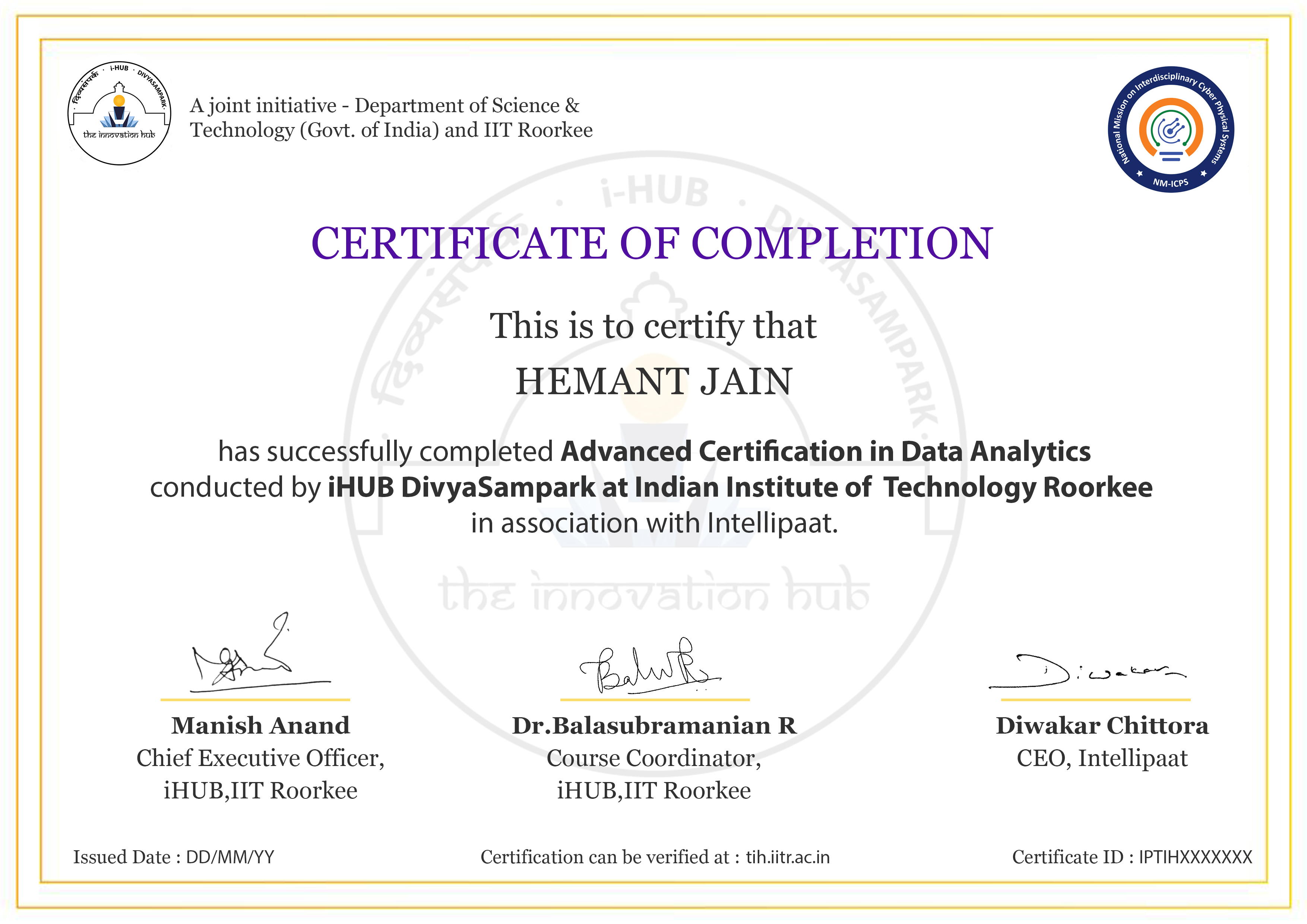

Get your Data Analytics Certification from iHUB IIT Roorkee. Learn Python, SQL, statistics, and Power BI while exploring advanced AI and ChatGPT modules from our industry experts and IIT professors. Benefit from IIT faculty-led training, real-world projects, and placement assistance through our 3100+ hiring partners.

Along with that, you will also be able to pass the following certifications:

Experience Campus Immersion at iHUB IIT Roorkee & Build Formidable Networks With Peers & IIT Faculty

Land Your Dream Job Like Our Alumni

iHUB DivyaSampark at IIT Roorkee is recognized under the National Mission on Interdisciplinary Cyber-Physical Systems (NM-ICPS) by the Department of Science and Technology (DST) and focuses on promoting innovation in advanced technologies such as AI and ML. The hub is pivotal in technology development, incubation, and startups, particularly in sectors like healthcare, Industry 4.0, smart cities, and defence.

To meet market demands and promote a self-reliant India, iHUB DivyaSampark is working with industry and government to advance CPS by integrating smart devices with next-generation technologies.

Our Data Analytics Certification Course is in association with iHUB IIT Roorkee. It is industry-designed and developed for you to fast-track your career in Data Analytics. If you don’t want to get into the nitty-gritty of programming and spend long hours coding, then this program is for you.

This online Data Analyst Course involves the following:

Intellipaat offers the best data analysis courses and other big data courses such as Hadoop, data science, machine learning, Python Certification Training, Python for data science, and business analytics certification.

If you are looking for free resources on Data Analytics, check out our YouTube channel for free videos and our blogs on data analytics tutorials and interview questions.

Data Analysis is the process of data cleaning, transformation, and reporting that produces essential information which can help in better decision-making.
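The three stages named above (cleaning, transformation, and reporting) can be sketched in a few lines of Python. This is only an illustrative sketch using the standard library: the `raw_sales` records, the field names, and the helper functions `clean`, `transform`, and `report` are all hypothetical and are not taken from the course material.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical raw records; names and values are invented for illustration.
raw_sales = [
    {"region": " North ", "amount": "1200"},
    {"region": "south", "amount": "950"},
    {"region": "North", "amount": ""},        # missing amount
    {"region": "South", "amount": "1,050"},   # thousands separator
    {"region": None, "amount": "700"},        # missing region
]


def clean(records):
    """Cleaning: drop rows with missing fields, normalize text and numbers."""
    cleaned = []
    for row in records:
        region, amount = row.get("region"), row.get("amount")
        if not region or not amount:
            continue  # skip incomplete rows
        cleaned.append({
            "region": region.strip().title(),
            "amount": float(amount.replace(",", "")),
        })
    return cleaned


def transform(records):
    """Transformation: group the cleaned amounts by region."""
    grouped = defaultdict(list)
    for row in records:
        grouped[row["region"]].append(row["amount"])
    return dict(grouped)


def report(grouped):
    """Reporting: summarize each region as a total and an average."""
    return {
        region: {"total": sum(amounts), "average": mean(amounts)}
        for region, amounts in sorted(grouped.items())
    }


summary = report(transform(clean(raw_sales)))
for region, stats in summary.items():
    print(f"{region}: total={stats['total']:.0f}, average={stats['average']:.0f}")
```

In practice, an analyst would more likely do the same steps with SQL, Excel, or a library such as pandas; plain Python is used here only to keep the sketch self-contained.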

Here are some of the top Data Analytics tools/programming languages:

A career path in Data Analytics is a promising choice and one of today’s most renowned careers. After completing this training, you can find jobs in this field at various global companies and industries.

The Bureau of Labor Statistics predicts that the demand for Data Analytics professionals will increase by 23% by 2026.

To become a Data Analyst, you must have the following things:

A bachelor’s degree is required to work as a Data Analyst. The degree need not be from a specific discipline, although a degree in computer science, probability, or mathematics can be beneficial. Focus on developing skills first, then qualifications.

For those who prefer to learn at their own pace through recorded videos, Intellipaat provides self-paced training. This training includes lifetime access to the LMS for learning modules and email query resolution. You will also get updated versions of the course material at no additional cost.

To be eligible for the placement pool, the learner must complete the Data Analyst training and submit all projects and assignments. After this, they must pass the Placement Readiness Test (PRT) to enter the placement pool and access our job portal along with career mentoring sessions.

Intellipaat helps fresh college graduates with its placement assistance programs. Once you clear the PRT, you will be moved to the placement pool.

Intellipaat’s Data Analyst course, in collaboration with IIT Roorkee, aims to equip learners with a comprehensive understanding of statistical techniques, data extraction, data analysis, and Big Data Analytics. Advanced Microsoft Excel techniques, such as VBA scripting for automation, will be covered. The course also focuses on mastering data visualization tools like Power BI for organizing data effectively and designing insightful dashboards, and on acquiring practical skills through real-world case studies and projects to enhance career prospects in data analytics.

The overall time duration of this course is 7 months.
