
Learning Format: Online Bootcamp

Live Classes: 7 Months

Career Services: by Intellipaat

MITxMicroMasters: Certification

EMI Starts: at ₹8,000/month*

Our Data Engineering course provides in-depth knowledge of SQL, Python, data pipelines, data transformation, Spark, and the cloud services of AWS and Azure. Multiple courses and real-world projects help you master core skills such as building production-ready ETL pipelines, pulling data from multiple sources, building cloud data warehouses, and data modeling.

About MIT and MIT IDSS

The Institute for Data, Systems, and Society (IDSS) is a cross-disciplinary unit made up of faculty from across the Massachusetts Institute of Technology (MIT). IDSS advances education and research in data analysis, statistics, and machine learning, and applies these tools in collaboration with social scientists, community, and policymakers to address complex societal challenges.

On the completion of this data engineering course certification, you will:


57% Average Salary Hike

$1,28,000 Highest Salary

12000+ Career Transitions

300+ Hiring Partners

Career Transition Handbook

*Past record is no guarantee of future job prospects

They design and build complex data pipelines and are expert coders in languages such as Python. These professionals work closely with data scientists to run that code on tools such as the Hadoop ecosystem.

They are typically database administrators and are responsible for data management. These professionals have in-depth knowledge of databases and also support business operations.

They are skilled in data warehousing and create dimension models for loading data for large-scale enterprise reporting solutions. These professionals are experts in using ELT tools and SQL.

They are responsible for ETL processes, performance administration, and dimensional design. These professionals handle the full back-end development, including the design of the table structures.

They design and define the overall structure of a system to improve the business of an organization. The job role of these professionals involves breaking large projects into manageable pieces.

15+ Skills to Master

SQL, Data Warehousing, OLAP, OLTP, ETL, Python Programming, Spark, Spark Streaming, AWS, EMR, Apache Airflow, S3, S3 Glacier, Docker, Kubernetes

4+ Tools to Master

Projects in data engineering will be a part of your certification to consolidate your learning and ensure that you have real-world industry experience.

Data Engineering Course Admission Details

The application process consists of three simple steps. Selected candidates receive an offer of admission based on feedback from the interview panel. They are notified by email and phone, and can reserve their seats by paying the admission fee.

Submit Application

Tell us a bit about yourself and why you want to join this program

Application Review

An admission panel will shortlist candidates based on their application

Admission

Selected candidates will be notified within 3 days

Total Admission Fee

EMI Starts at

We have partnered with financing companies to offer competitive financing options at a 0% interest rate.

Financing Partners


Admissions close once the required number of students is enrolled for the upcoming cohort. Apply early to secure your seat.

| | Date | Time | Batch Type |
|---|---|---|---|
| Program Induction | 6th July 2025 | 08:00 PM - 11:00 PM IST | Weekend (Sat-Sun) |
| Regular Classes | 6th July 2025 | 08:00 PM - 11:00 PM IST | Weekend (Sat-Sun) |

Data engineering is a branch of data science that prepares data for analysis by data scientists and data analysts. This online data engineering course covers the practical application of data collection techniques and the maintenance of an organization’s data pipeline systems.
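To make the idea of "preparing data for analysis" concrete, here is a minimal sketch of an extract-transform-load (ETL) step using only the Python standard library. It is an illustration, not course material: the sample data, the `orders` table, and the function names are all hypothetical.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; in practice this could come from a file, API, or database.
RAW_CSV = """order_id,customer,amount
1, alice ,19.99
2,BOB,
3,carol,5.00
"""


def extract(text):
    """Extract: read raw CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: normalize customer names and drop rows with no amount."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # skip incomplete records
        cleaned.append(
            (int(row["order_id"]), row["customer"].strip().lower(), float(amount))
        )
    return cleaned


def load(records, conn):
    """Load: write cleaned records into a SQLite table that analysts can query."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", records)
    conn.commit()


# Run the three stages against an in-memory database.
conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)

# An analyst-style query over the prepared data.
total = conn.execute("SELECT COUNT(*), ROUND(SUM(amount), 2) FROM orders").fetchone()
print(total)
```

Production pipelines usually replace each stage with dedicated tooling (for example, Spark for transformation or a cloud data warehouse for loading), but the extract, transform, and load stages keep the same shape.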

Intellipaat’s online Data Engineering courses will validate your skills in the domain and will add value to your resume. The real-life practical applications will help you develop a strong skill set that you can showcase to recruiters. Get the best Data Engineer course certification and excel in your data engineering career.

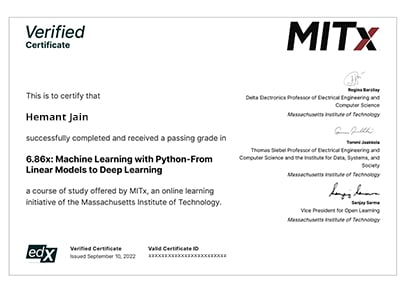

After completing the online data engineering course, along with the projects and assignments in this program, you will receive your data engineering certification.

According to Glassdoor, the average annual salary of a certified data engineer in India is ₹850,000.

It is recommended that you have a basic level of knowledge in programming or any object-oriented coding language to better understand the Data Engineering concepts.

The top companies hiring data engineers around the globe are as follows:

Yes, you can easily join the data engineering courses even if you do not have technical experience or are not from a technical background. However, knowing any object-oriented programming language will be helpful.

The data engineering courses include six months of live classes and lifelong access to the course material. During this period, we suggest devoting six to seven hours a week to mastering the data engineering concepts taught in the online classes.

Intellipaat provides career guidance services such as interview preparatory sessions, industry mentorship, and more for all learners enrolled in the Data Engineering courses. MIT is not responsible for placements and career services.

You can expect a job offer with a salary in the range of Rs. 7-8 lakhs. However, this depends on how you perform in your interview. We have seen our learners achieve salary packages of up to 30 LPA.

Yes, Intellipaat certification is highly recognized in the industry. Our alumni work in more than 10,000 corporations and startups, which shows that our programs are industry-aligned and well-recognized. Additionally, the Intellipaat program is run in partnership with the National Skill Development Corporation (NSDC), which further validates its credibility. Learners receive an NSDC certificate along with the Intellipaat certificate for the programs they enroll in.

What is included in this course?
