Machine Learning in Data Science

Data Science has undergone a paradigm shift as a result of the powerful and disruptive technology known as machine learning. Machine learning has pushed the limits of data analysis and interpretation by allowing computers to learn from data independently and make wise decisions without the need for explicit programming.

In this blog, we will explore the fundamentals of Machine Learning in Data Science, its applications, algorithms, and its impact on various sectors.



Machine learning is used to predict, categorize, classify, and find polarity in given datasets, and it is concerned with minimizing the error of those results. Machine learning comprises many algorithms, such as the SVM algorithm, the Bayes algorithm, and logistic regression. These algorithms are trained on existing data so that, when given new input data, they can reach a conclusion as accurately as possible.

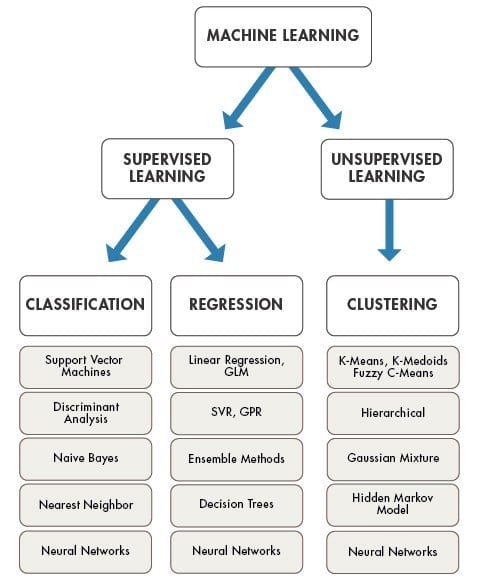

Machine learning is categorized into supervised, unsupervised, and reinforcement learning:

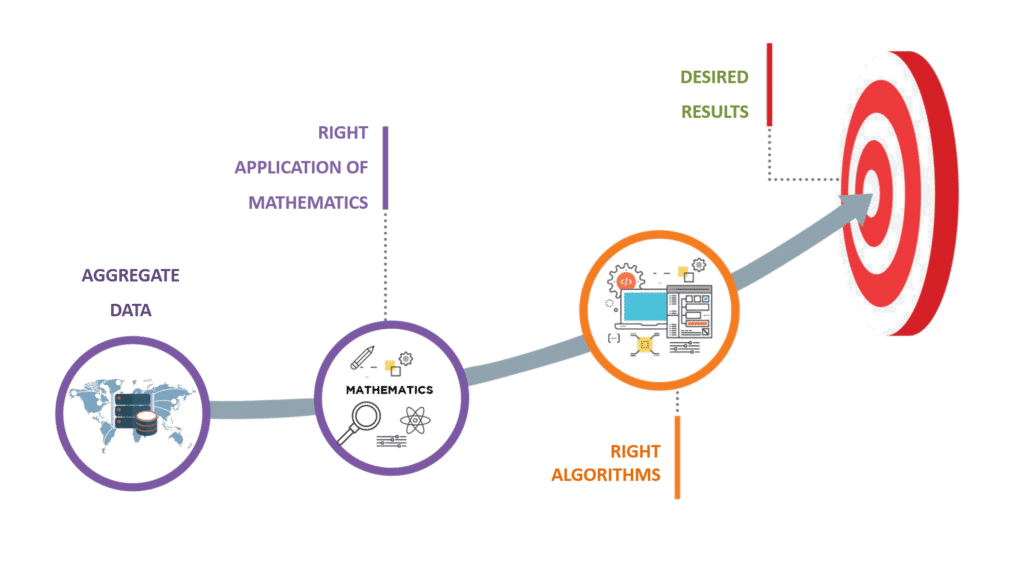

Machine learning algorithms are a critical element of data science. An algorithm is a defined set of rules followed to solve a particular problem.

Some of the important data science algorithms and techniques include regression, classification, clustering, decision trees, and random forests, which fall under the broader learning approaches of supervised, unsupervised, and reinforcement learning. In addition to these, organizations develop many algorithms of their own to serve their unique needs.

Supervised learning is used for structured, labeled datasets. It analyzes the training data and generates a function that can then be applied to other datasets.
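As a rough illustration of "analyze the training data and generate a function", the sketch below trains a very simple nearest-centroid classifier. The technique, the function name `train_nearest_centroid`, and the study-hours data are assumptions chosen for illustration and do not come from the original text.

```python
from collections import defaultdict

def train_nearest_centroid(features, labels):
    """Learn one mean value (centroid) per label and return a prediction function."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for x, label in zip(features, labels):
        sums[label] += x
        counts[label] += 1
    centroids = {label: sums[label] / counts[label] for label in sums}

    def predict(x):
        # Choose the label whose centroid is closest to the new value.
        return min(centroids, key=lambda label: abs(x - centroids[label]))

    return predict

# Hypothetical labeled training data: hours studied and the exam result.
hours = [1.0, 2.0, 3.0, 7.0, 8.0, 9.0]
results = ["fail", "fail", "fail", "pass", "pass", "pass"]

# Training produces a function that is then applied to unseen data.
predict = train_nearest_centroid(hours, results)
new_predictions = [predict(h) for h in [2.5, 6.0]]
print(new_predictions)
```

The important point is the shape of the workflow: labeled examples go in, and a reusable prediction function comes out.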


Unsupervised learning is used for raw, unlabeled datasets. Its main task is to find structure in raw data, in effect converting it into structured data. In today's world, there is a huge amount of raw data in every field; even computers generate log files, which are a form of raw data. This makes unsupervised learning a very important part of machine learning.

Reinforcement Learning (RL) is a distinct field within machine learning that concentrates on instructing agents to make a sequence of decisions in an environment, all in pursuit of optimizing the total accumulated rewards. The primary goal of RL is to empower an agent to engage with its surroundings, carefully observe the consequences resulting from its actions, and subsequently modify its behavior based on those observations.

In the domain of reinforcement learning, the acquisition of knowledge occurs through a sequence of trial and error iterations. The agent actively explores the environment by taking actions, and based on the ensuing rewards or penalties, it leverages this information to refine its policy or strategy. The ultimate objective is to uncover an optimal policy that yields the highest possible long-term cumulative rewards.

An integral concept in reinforcement learning is the Markov Decision Process (MDP), which establishes a mathematical framework for modeling problems involving sequential decision-making. The MDP encompasses several key elements, including states, actions, transition probabilities, rewards, and a discount factor that governs the significance of future rewards. Collectively, these components define the dynamics of the decision-making process within the framework of RL.
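To make the MDP components concrete, here is a minimal sketch of a four-state corridor in which the agent earns a reward of 1 for reaching the last state. It uses value iteration, a planning method that assumes the transition probabilities and rewards are already known, rather than trial-and-error learning. The corridor, the reward of 1, and the discount factor of 0.9 are illustrative assumptions.

```python
GAMMA = 0.9                  # discount factor (assumed value)
STATES = [0, 1, 2, 3]        # state 3 is the terminal goal
ACTIONS = ["left", "right"]

def transitions(state, action):
    """Return a list of (probability, next_state, reward) tuples."""
    if state == 3:
        return [(1.0, 3, 0.0)]  # terminal state: stay put, no further reward
    next_state = max(state - 1, 0) if action == "left" else state + 1
    reward = 1.0 if next_state == 3 else 0.0
    return [(1.0, next_state, reward)]  # deterministic moves in this toy example

def q_value(state, action, values):
    """Expected return of taking `action` in `state`, then following `values`."""
    return sum(p * (r + GAMMA * values[s2])
               for p, s2, r in transitions(state, action))

def value_iteration(tolerance=1e-9):
    """Repeat the Bellman optimality update until the values stop changing."""
    values = {s: 0.0 for s in STATES}
    while True:
        new_values = {s: max(q_value(s, a, values) for a in ACTIONS)
                      for s in STATES}
        delta = max(abs(new_values[s] - values[s]) for s in STATES)
        values = new_values
        if delta < tolerance:
            return values

values = value_iteration()
# The greedy policy picks the action with the highest expected return.
policy = {s: max(ACTIONS, key=lambda a: q_value(s, a, values))
          for s in STATES if s != 3}
print(values)
print(policy)
```

Because of the discount factor, states farther from the goal receive lower values, and the resulting policy moves right in every non-terminal state.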

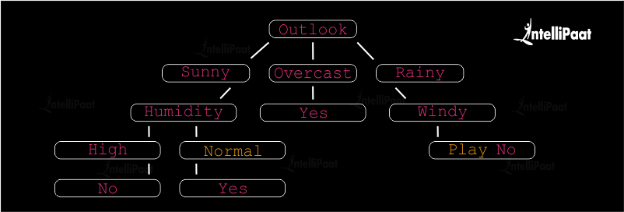

The decision tree is a widely utilized supervised machine learning algorithm that finds applications in tasks such as classification and regression. It adopts a tree-like structure, wherein internal nodes symbolize features or attributes, branches represent decision rules based on those attributes, and leaf nodes correspond to outcomes or predictions.

The following example describes a decision tree that classifies whether a person is likely to play tennis, using weather conditions as the determining factors:

In this example, the decision tree starts with the “Outlook” attribute, which represents different weather conditions. The tree branches out based on the possible values of the Outlook attribute: “Sunny,” “Overcast,” and “Rainy.”

If the Outlook is “Sunny,” the tree considers the next attribute, which is “Humidity.” If the Humidity is “High,” the tree predicts “No” for playing tennis. If the Humidity is “Normal,” the tree predicts “Yes” for playing tennis.

If the Outlook is “Overcast,” which is a favorable weather condition, the tree directly predicts “Yes” for playing tennis.

If the Outlook is “Rainy,” the tree considers the next attribute, which is “Windy.” If it is “Windy,” the tree predicts “No” for playing tennis. If it is not “Windy,” the tree predicts “Yes” for playing tennis.

This decision tree can be used to predict whether a person will play tennis based on the given weather conditions. By following the branches of the tree based on the values of attributes, we can reach the predicted outcome at the leaf nodes.
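The tree described above can be written directly as nested conditions. This is a hand-coded sketch of that specific tree, not a tree learned from data; the function name `predict_play_tennis` and its parameter names are chosen here for illustration.

```python
def predict_play_tennis(outlook, humidity=None, windy=None):
    """Follow the branches of the play-tennis tree and return "Yes" or "No"."""
    if outlook == "Overcast":
        return "Yes"                                  # leaf reached directly
    if outlook == "Sunny":
        return "No" if humidity == "High" else "Yes"  # next attribute: Humidity
    if outlook == "Rainy":
        return "No" if windy else "Yes"               # next attribute: Windy
    raise ValueError(f"Unknown outlook: {outlook!r}")

print(predict_play_tennis("Sunny", humidity="Normal"))
print(predict_play_tennis("Rainy", windy=True))
```

Each `if` corresponds to an internal node, and each `return` corresponds to a leaf node.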


We will be using three algorithms in this course

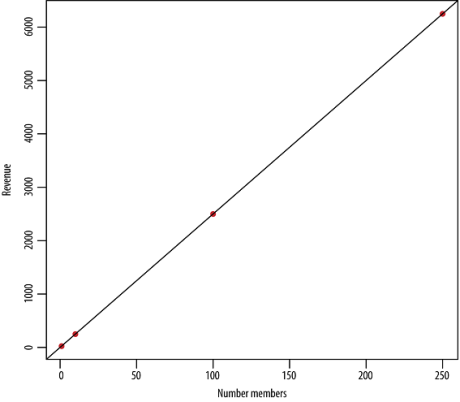

Linear regression is one of the most well-known and popular algorithms in machine learning and statistics. The model assumes a linear relationship between the input variables and the output variable. It is represented as a linear equation that combines a set of inputs to produce a predicted output, and training consists of estimating the values of the coefficients used in that equation.
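For a single input variable, the coefficients of the line y = slope * x + intercept can be estimated with ordinary least squares. The sketch below is a minimal version in plain Python; the function name and the sample data are assumptions made for illustration.

```python
def fit_simple_linear_regression(xs, ys):
    """Estimate slope and intercept by ordinary least squares (one input variable)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance(x, y) / variance(x); the 1/n factors cancel out.
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical data that lies exactly on the line y = 2x + 1.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_simple_linear_regression(xs, ys)
prediction = slope * 5.0 + intercept  # predicted output for a new input x = 5
print(slope, intercept, prediction)
```

With several input variables the same idea applies, but the coefficients are usually estimated with matrix methods from a numerical library.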


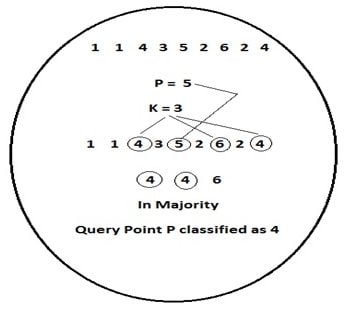

k-Nearest Neighbors (k-NN)

This algorithm is used for classification problems and for regression problems as well.

Its model is simply the complete stored training dataset. A prediction is made by searching the entire training data for the k instances most similar to the new input. Similarity can be measured with the Euclidean distance formula. When solving a regression problem, the prediction is the mean or median of the values of those k neighbors. In practice, this algorithm is mainly used for classification problems.


When solving a classification problem, the output is the class that occurs most frequently among the k nearest neighbors.
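A minimal sketch of both uses of k-NN, with Euclidean distance computed by `math.dist` (available in Python 3.8 and later). The helper names and the small datasets are assumptions for illustration.

```python
import math
from collections import Counter

def nearest_neighbors(training_data, query, k):
    """Return the k (point, value) pairs closest to the query by Euclidean distance."""
    return sorted(training_data,
                  key=lambda example: math.dist(example[0], query))[:k]

def knn_classify(training_data, query, k=3):
    """Classification: the most frequent class among the k nearest neighbors."""
    labels = [label for _, label in nearest_neighbors(training_data, query, k)]
    return Counter(labels).most_common(1)[0][0]

def knn_regress(training_data, query, k=3):
    """Regression: the mean target value of the k nearest neighbors."""
    targets = [t for _, t in nearest_neighbors(training_data, query, k)]
    return sum(targets) / len(targets)

# Hypothetical labeled points for classification.
labeled_points = [
    ((1.0, 1.0), "A"), ((1.0, 2.0), "A"), ((2.0, 1.0), "A"),
    ((6.0, 6.0), "B"), ((7.0, 6.0), "B"), ((6.0, 7.0), "B"),
]
# Hypothetical numeric targets for regression.
valued_points = [((1.0,), 10.0), ((2.0,), 20.0), ((3.0,), 30.0), ((10.0,), 100.0)]

predicted_class = knn_classify(labeled_points, (2.0, 2.0), k=3)
predicted_value = knn_regress(valued_points, (2.0,), k=3)
print(predicted_class, predicted_value)
```

Note that there is no training step: all the work happens at prediction time, which is why the whole dataset must be kept.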


K-means and K-median Clustering

K-means and K-median clustering are widely employed algorithms in unsupervised machine learning for the purpose of clustering analysis. These iterative algorithms strive to partition a given dataset into separate groups or clusters based on similarity or distance metrics.

K-means clustering, which is a centroid-based algorithm, aims to minimize the sum of squares within each cluster. It works by repeatedly assigning data points to the nearest centroid and recalculating the centroids until convergence. The number of clusters, denoted as K, needs to be specified in advance. K-means clustering is highly efficient and widely adopted due to its simplicity. However, it assumes that clusters are spherical, equally sized, and possess similar variances.
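The assign-and-recalculate loop can be sketched in a few lines of plain Python. This is a simplified version that takes the starting centroids as an argument instead of choosing them randomly; the data points and starting centroids are assumptions for illustration.

```python
import math

def k_means(points, initial_centroids, max_iterations=100):
    """Assign points to the nearest centroid, then recompute centroids, until stable."""
    centroids = list(initial_centroids)
    assignments = None
    for _ in range(max_iterations):
        # Assignment step: index of the nearest centroid for every point.
        new_assignments = [
            min(range(len(centroids)), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        if new_assignments == assignments:
            break  # converged: no point changed cluster
        assignments = new_assignments
        # Update step: each centroid becomes the mean of its assigned points.
        for c in range(len(centroids)):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:  # keep the old centroid if a cluster is empty
                centroids[c] = tuple(sum(coord) / len(members)
                                     for coord in zip(*members))
    return centroids, assignments

# Hypothetical 2-D data with two visibly separate groups (K = 2).
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 0.6),
          (8.0, 8.0), (9.0, 9.0), (8.5, 9.5)]
centroids, assignments = k_means(points, initial_centroids=[points[0], points[3]])
print(assignments)
print(centroids)
```

Real implementations usually pick the starting centroids randomly or with a seeding strategy and run the algorithm several times, because the result depends on the starting positions.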

On the other hand, K-median clustering is a variant of K-means that minimizes the sum of absolute distances within each cluster. Instead of using the mean as the centroid, it employs the median. K-median clustering is robust to outliers and is more suitable when the data distribution is non-Gaussian or when outliers are present. However, it involves higher computational complexity compared to K-means.
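The difference in robustness is easiest to see in the step that recomputes a cluster center. The sketch below compares the mean-based center used by K-means with the coordinate-wise median used by K-median on a cluster that contains one outlier; the helper names and the data are assumptions for illustration.

```python
import statistics

def mean_center(points):
    """Cluster center as used by K-means: the mean of each coordinate."""
    return tuple(statistics.mean(coord) for coord in zip(*points))

def median_center(points):
    """Cluster center as used by K-median: the median of each coordinate."""
    return tuple(statistics.median(coord) for coord in zip(*points))

# Hypothetical cluster with a single extreme outlier.
cluster = [(1.0, 1.0), (2.0, 2.0), (3.0, 3.0), (100.0, 100.0)]

center_by_mean = mean_center(cluster)
center_by_median = median_center(cluster)
print(center_by_mean)    # pulled strongly toward the outlier
print(center_by_median)  # stays near the bulk of the points
```

The mean lands far from every typical point, while the median stays among them, which is why K-median is preferred when outliers are expected.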

Both K-means and K-median clustering have numerous applications across various domains. They can be effectively used for tasks such as customer segmentation, image segmentation, anomaly detection, recommendation systems, and more. These algorithms provide valuable insights into the underlying structure of the data, facilitating the identification of patterns and similarities within the dataset.