
According to GlobeNewswire, the DevOps market is set to grow at a CAGR of 19.2% until 2031. As the market grows, so does the number of jobs. According to foundit.com, there are 34,000+ jobs available right now in India, and according to LinkedIn, there are more than 44,000 in the US. If you’re planning to appear for your next DevOps interview, this guide to DevOps interview questions should be extremely helpful!

Table of contents

Most Frequently Asked DevOps Interview Questions

1. What is DevOps?

2. How do you define the role of a DevOps engineer?

3. How does DevOps differ from traditional software development methodologies?

4. How does HTTP/HTTPS work?

5. What is CI and CD? What is its purpose?

DevOps Basic Interview Questions

1. What is DevOps?

DevOps is often confused with a tool or a technology. However, it is neither: DevOps is a set of practices that helps development and operations teams work together and collaborate more closely. The name itself combines Development and Operations, i.e. DevOps.

To implement these practices, teams use a suite of tools such as Docker, Kubernetes, Git, and Ansible.

2. How do you define the role of a DevOps engineer?

A DevOps engineer is responsible for increasing collaboration between the development team and the IT operations team by applying a set of principles and tools. In practice, they do the following:

- Automate Processes by creating CI/CD pipelines

- Manage Infrastructure on cloud or on-premise

- Monitor Performance of deployed applications

- Ensure Security

- Improve Collaboration

3. How does DevOps differ from traditional software development methodologies?

| Traditional Development | DevOps |
| --- | --- |
| Software is shipped only once all the features are developed, which lengthens development time. | Software features are divided into phases, and the software is released after each phase, e.g. v1.0, v1.1, v1.2. |
| No automation between teams for collaboration. | Automation is present, for example configuration management, automated test cases, and containerization. This significantly reduces the dependency between teams and shortens deployment times. |
| Development and operations teams work in silos. | Increased collaboration between teams, promoting a culture of shared responsibility. |
| Scaling happens only once the software is live; because it is neither planned nor automated, it leads to problems. | Scaling is automated using Infrastructure as Code tools (e.g. Terraform), which makes scaling much easier. |


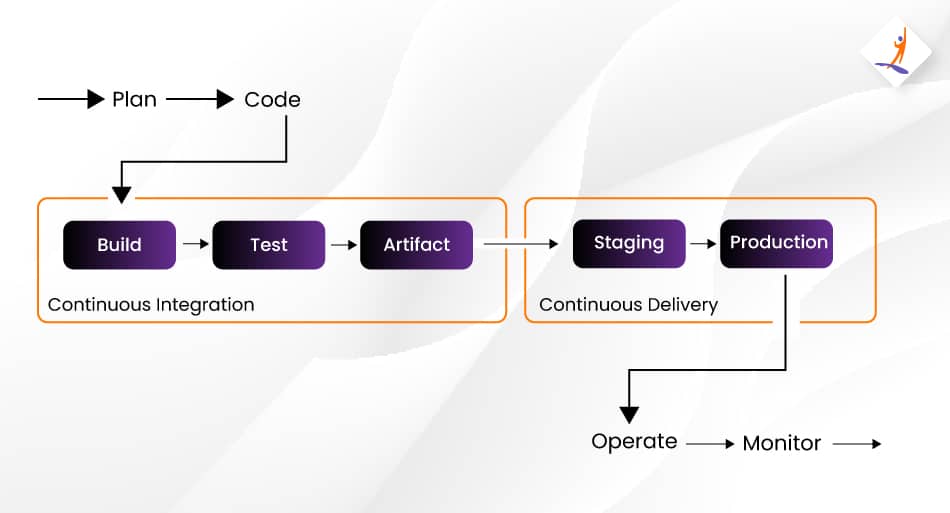

4. What are CI/CD Pipelines?

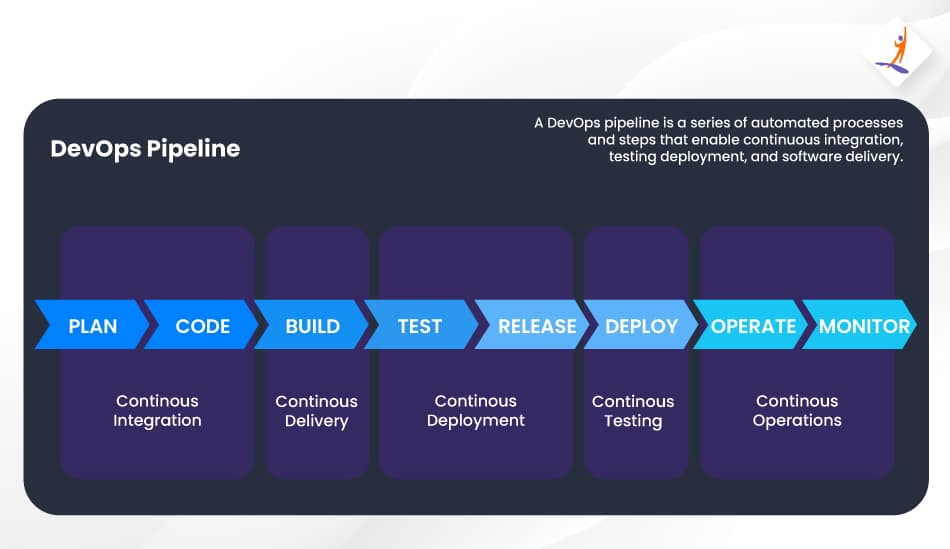

CI/CD pipelines are Continuous Integration and Continuous Deployment pipelines, which deploy software automatically once a change is submitted to the version control system. The change passes through a series of automated stages, which makes deployment faster and less error-prone.

The stages involved in a CI/CD pipeline correspond to the DevOps phases described in the next question.

5. What are the different phases in DevOps?

Plan: Requirements are gathered from the stakeholders, and the first set of features are decided, which will be rolled out in the first release.

Code: Requirements are broken down into coding tasks and assigned to developers. Once the code is done, developers push it to a version control host such as GitHub.

Build: In this stage, the code is built into an executable.

Test: The executable is then moved to a test server and tested against automated test cases.

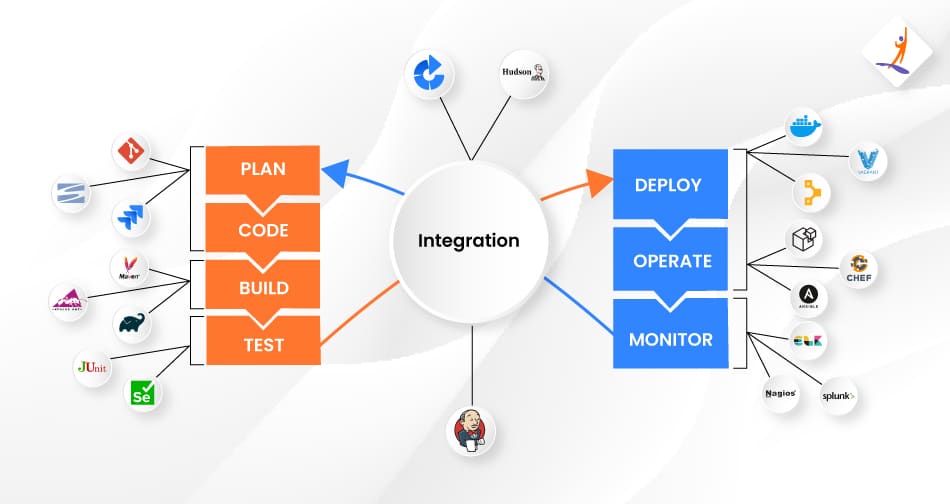

Integration: Integration is handled by CI/CD pipelines created with tools like Jenkins. Their job is to move artifacts from one stage to the next, for example from code to build and from build to test.

Deploy & Operate: This stage combines configuration management, containerisation and deployment to production. All these tasks are automated.

Monitor: Finally, once the application is deployed, it is continuously monitored for anomalies by monitoring tools. Any feedback received from these tools is forwarded to the Plan stage again.

DevOps Interview Questions For Freshers

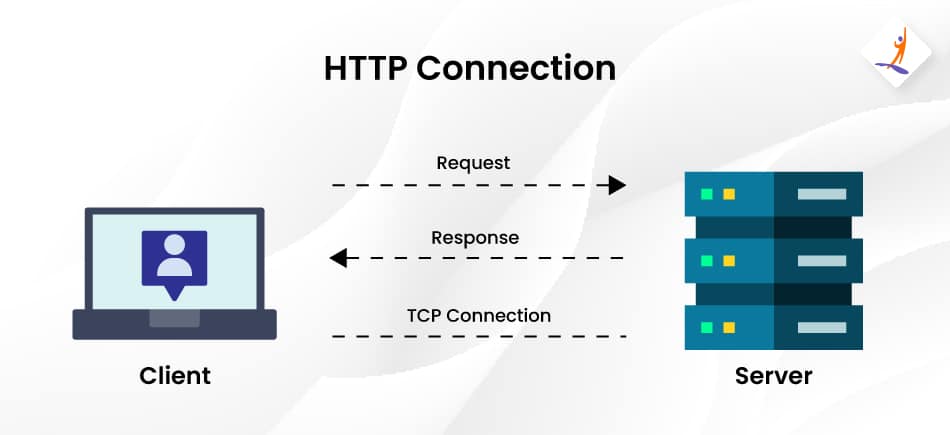

6. How does HTTP/HTTPS work?

HTTP, or Hypertext Transfer Protocol, works on a client–server model: a client (typically a browser) sends a request for a web resource, and the server returns a response containing a status code, headers, and usually a body.

HTTPS is the secure version of HTTP. It uses TLS to encrypt the data exchanged between your machine and the server, and it lets the client verify the server's identity through its certificate.
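To make the request and response cycle concrete, here is a minimal sketch using only Python's standard library. It starts a throwaway server on the local machine and sends it one GET request. The handler name `HelloHandler`, the path `/status`, and the response text are made up for illustration. It uses plain HTTP; with HTTPS the same exchange would travel inside a TLS-encrypted connection.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class HelloHandler(BaseHTTPRequestHandler):
    """Answers every GET request with a small plain-text body."""

    def do_GET(self):
        body = b"hello from the server"
        self.send_response(200)  # status code of the response
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the example output quiet


def fetch_once():
    # Port 0 asks the OS for any free port; 127.0.0.1 keeps traffic local.
    server = HTTPServer(("127.0.0.1", 0), HelloHandler)
    port = server.server_address[1]
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        conn = http.client.HTTPConnection("127.0.0.1", port, timeout=5)
        conn.request("GET", "/status")  # the client sends a request
        resp = conn.getresponse()       # the server answers with a response
        result = (resp.status, resp.getheader("Content-Type"), resp.read())
        conn.close()
        return result
    finally:
        server.shutdown()
        server.server_close()


status, content_type, body = fetch_once()
print(status, content_type, body.decode())
```

The three values printed are the parts of the response mentioned above: the status code, one header, and the body.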

7. What is CI and CD? What is its purpose?

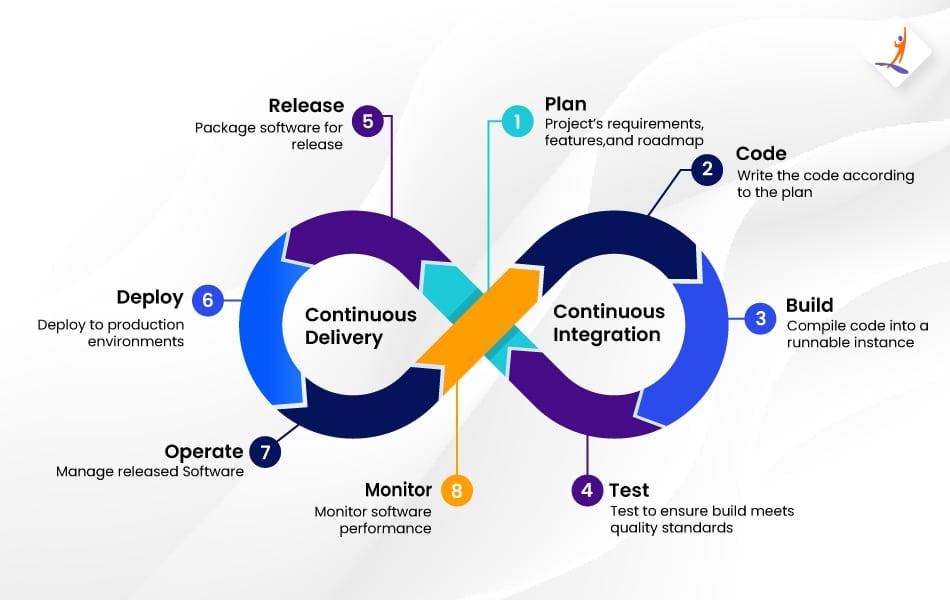

A DevOps pipeline comprises two stages: Continuous Integration and Continuous Deployment.

Continuous Integration comprises three parts: build, test, and artifact versioning.

In Continuous Integration, developers merge their code into a single shared repository. Each change is automatically built and tested, and if it passes the tests, the resulting versioned artifact is uploaded to an artifact repository such as Nexus or Artifactory.

Continuous Deployment starts from here. Its job is to deploy the latest artifact to a staging environment and finally to a production server. The purpose of both is to catch integration problems early and to make releases fast and repeatable.
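The flow described above can be sketched as a list of stages that run in order and stop at the first failure. This is only an illustration: the stage names and the `run_pipeline` helper are hypothetical, and the lambdas stand in for real build, test, and deploy commands that a tool such as Jenkins would run.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first one that fails."""
    log = []
    for name, step in stages:
        ok = step()
        log.append(f"{name}: {'passed' if ok else 'failed'}")
        if not ok:
            break  # later stages never receive a broken build
    return log


# Hypothetical stand-ins for real build, test, and deploy commands.
stages = [
    ("build", lambda: True),
    ("test", lambda: True),
    ("publish-artifact", lambda: True),
    ("deploy-staging", lambda: True),
    ("deploy-production", lambda: True),
]

for line in run_pipeline(stages):
    print(line)
```

If the test stage returned False, the artifact would never be published or deployed, which is the main safety property a CI/CD pipeline provides.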


8. How do you measure the success of DevOps implementation?

The following indicators can help measure the success of a DevOps implementation:

- Deployment Frequency

- Time taken from code commit to deployment on production

- Change failure rate

- Mean Time to Recovery

- Availability and Uptime

- Automation Levels

- Code Quality Metrics

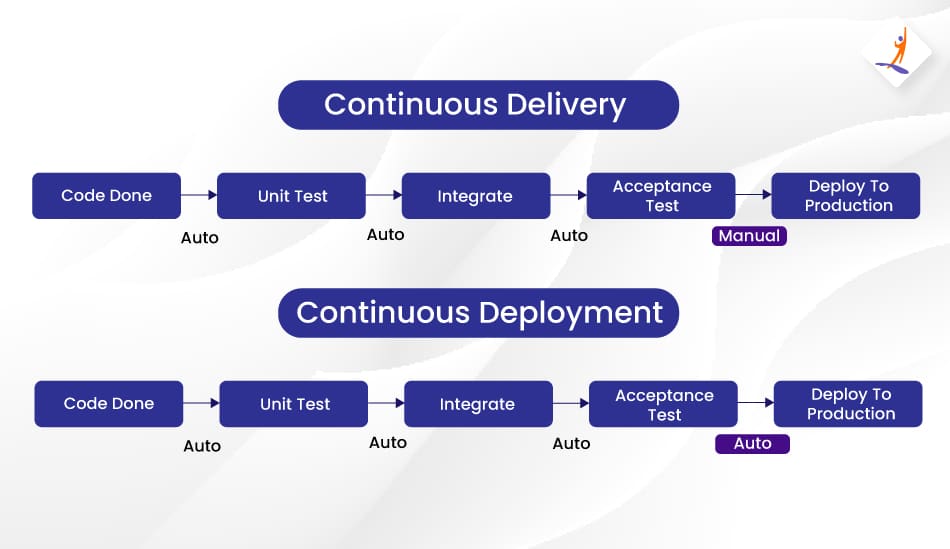

9. What is continuous delivery?

Continuous delivery is a software development practice that automates the release process. Its primary objective is to ensure that software is consistently prepared for deployment to production. This means that code changes are frequently integrated, built, tested, and deployed in a repeatable and automated manner. It helps the teams to reduce the time and effort required to release software while maintaining high quality and reliability. With continuous delivery, teams can release software more frequently and confidently.

10. What is the difference between continuous deployment and continuous delivery?

Continuous deployment is a software development practice where code changes are automatically deployed to production as soon as they pass the necessary tests and checks. Continuous delivery, on the other hand, focuses on automating the software release process but allows for manual intervention to determine when and how code changes are deployed to production.

While continuous deployment is fully automated, continuous delivery provides the flexibility to choose when and how to release code changes, even though the process is automated.
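The difference comes down to a single decision point, which the following sketch tries to capture. The function name `should_deploy` and the mode strings are invented for this example and do not come from any particular tool.

```python
def should_deploy(tests_passed: bool, mode: str, approved: bool = False) -> bool:
    """Decide whether a build goes to production.

    mode "deployment": every passing build is released automatically.
    mode "delivery":   a passing build is release-ready but waits for approval.
    """
    if not tests_passed:
        return False
    if mode == "deployment":
        return True
    if mode == "delivery":
        return approved
    raise ValueError(f"unknown mode: {mode}")


print(should_deploy(True, "deployment"))               # released automatically
print(should_deploy(True, "delivery"))                 # waits for a person
print(should_deploy(True, "delivery", approved=True))  # released after approval
```

In both modes a failing build never reaches production. Only the presence of the manual approval step differs.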

11. What is infrastructure as code?

Infrastructure as code (IaC) is a practice where infrastructure is defined and managed using code. Instead of manually configuring servers and infrastructure components, IaC allows teams to automate the provisioning, configuration, and deployment of infrastructure using code.

This approach helps teams achieve consistency, reduce errors, and increase speed and efficiency. IaC also enables teams to version control their infrastructure code, making it easier to track changes and collaborate.
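As a rough illustration of the idea behind IaC tools, the sketch below compares a declared (desired) state with the current (actual) state and lists the changes needed to reconcile them. The resource names, attributes, and the `plan` function are hypothetical. Real tools such as Terraform do far more, but the declare-then-diff principle is similar.

```python
def plan(desired: dict, actual: dict) -> list:
    """Compare declared infrastructure with what exists and list the changes."""
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != spec:
            actions.append(("update", name))
    for name in sorted(actual):
        if name not in desired:
            actions.append(("delete", name))
    return actions


# Hypothetical resource names and attributes, for illustration only.
desired = {
    "web-server": {"size": "small", "count": 3},
    "database": {"size": "large", "count": 1},
}
actual = {
    "web-server": {"size": "small", "count": 2},
    "old-cache": {"size": "small", "count": 1},
}

print(plan(desired, actual))
```

Because the desired state is just data in a file, it can be reviewed and version controlled like any other code.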

12. Is DevOps a tool?

DevOps is not a tool or a technology. It is a set of practices aimed at breaking down silos between the development and operations teams.

13. What is a DevOps pipeline?

A DevOps pipeline is a sequence of steps through which an application is transformed from source code into a full-fledged application running on the production server. The code passes through various stages, such as build, test, and deploy, before it reaches production.

14. How would you ensure your DevOps pipeline is scalable and can handle increased demand?

Here are a few things that you can do:

- Leverage Cloud Infrastructure

- Adopt Microservices Architecture

- Containerization and Orchestration

- Parallelise CI/CD Pipelines

- Cache dependencies and builds

- Apply Infrastructure as Code
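Dependency caching, one of the points above, usually relies on a cache key derived from the dependency manifest. The sketch below shows one common way to do that with a content hash. The `cache_key` function, the `deps` prefix, and the sample lockfile contents are assumptions made for this example.

```python
import hashlib


def cache_key(lockfile_text: str, prefix: str = "deps") -> str:
    """Derive a cache key from the exact contents of a dependency lockfile."""
    digest = hashlib.sha256(lockfile_text.encode("utf-8")).hexdigest()
    return f"{prefix}-{digest[:16]}"


# Hypothetical lockfile contents.
monday = "requests==2.31.0\nflask==3.0.0\n"
tuesday = "requests==2.31.0\nflask==3.0.1\n"

print(cache_key(monday) == cache_key(monday))   # same dependencies: cache hit
print(cache_key(monday) == cache_key(tuesday))  # changed dependencies: cache miss
```

As long as the lockfile is unchanged, the pipeline can reuse previously downloaded dependencies instead of fetching them on every run.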

Interview Questions For DevOps Engineer

15. How will you convert a traditional project into a DevOps Project?

Here is a step-by-step guide on how to do this:

- Assess the state of the current project: Identify the dependencies between processes and the processes that do not need tweaking. Identify the dependencies that could become a bottleneck for the entire project if they fail. Finally, establish the Key Performance Indicators (KPIs) you want to achieve once the DevOps transition is complete.

- Make the team ready for the transition: The aim of implementing DevOps is to increase collaboration, use agile methodologies, and implement knowledge-sharing practices. Therefore, the team should be ready to embrace this mindset.

- Set up Version Control: This should ideally already exist for your current project. If it’s not present, start with this.

- Automate Continuous Integration (CI): Integrate CI tools like Jenkins, implement automated testing, and build the software with every commit.

- Implement Continuous Delivery and Continuous Deployment: Automate deployments, add rollback capabilities, and add approval gates for deployment.

- Integrate Infrastructure as Code (IaC) and Configuration Management: Automate your infrastructure via code using tools like Terraform or CloudFormation. Also start working on configuration management using tools like Ansible or Puppet.

- Implement Monitoring and Logging: Monitoring is the most important stage in a DevOps pipeline. Set up infrastructure monitoring and application monitoring using tools like Prometheus and Grafana.

16. What are the core operations of DevOps in terms of development and infrastructure?

The core operations of DevOps are application development, version control, unit testing, deployment with infrastructure, monitoring, configuration, and orchestration.

17. How do you integrate security testing into your DevOps pipeline?

To integrate security testing into a DevOps pipeline, it is essential to use security tools that automatically scan the code and infrastructure for vulnerabilities. These include static application security testing (SAST) and dynamic application security testing (DAST) tools.

These tools can be integrated into the build process to test for security issues and provide feedback to developers automatically. Additionally, it is essential to involve security experts early in the development process to identify potential security risks and ensure that security is integrated into every pipeline stage.
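To give a feel for what an automated check in the build might look like, here is a deliberately tiny static scan for hard-coded secrets. It is far simpler than a real SAST tool. The rule names and the `scan` function are invented for this sketch, and the sample key is the placeholder value used in AWS documentation.

```python
import re

# Two illustrative rules; real scanners ship with many more.
SECRET_PATTERNS = {
    "aws-access-key-id": re.compile(r"AKIA[0-9A-Z]{16}"),
    "private-key-block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def scan(source: str) -> list:
    """Return (line number, rule name) for each line that looks like a secret."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, rule))
    return findings


sample = 'region = "us-east-1"\naccess_key = "AKIAIOSFODNN7EXAMPLE"\n'
findings = scan(sample)
build_ok = not findings  # a pipeline step would fail the build when this is False

print(findings, build_ok)
```

Running a check like this on every commit gives developers feedback minutes after a mistake is introduced, rather than after release.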

18. What role does automation play in ensuring security in a DevOps environment?

Automation plays a critical role in ensuring security in a DevOps environment. Automating security checks and testing makes detecting and fixing potential vulnerabilities and threats easier. Automation can also help ensure the consistent application of security policies across the entire pipeline, reducing the risk of human error.

Additionally, automation can enable continuous monitoring and threat detection, allowing for a rapid response to any possible security incidents. Overall, automation helps to create a more secure and resilient DevOps environment.

DevOps Interview Questions For Experienced (2 To 5 Years)

19. What is the importance of continuous feedback in DevOps?

Continuous feedback is essential in DevOps as it helps teams identify and address issues early, improving the quality and speed of software delivery. By collecting feedback throughout the software development process, teams can continuously monitor the performance of their applications and infrastructure and make improvements as needed.

It also helps to ensure that the software meets the needs of its users, resulting in better customer satisfaction and higher business value. Continuous feedback is a key element of the DevOps culture and promotes a mindset of continuous learning and improvement.

20. How do you ensure that both development and operations teams adopt DevOps practices?

You can practice the following to ensure development and operations teams adopt DevOps practices:

- Establish clear goals and objectives for the DevOps initiative

- Encourage collaboration and communication between teams

- Provide training and resources to help teams learn and adopt DevOps practices

- Use metrics and KPIs to measure progress and identify areas for improvement

- Foster a culture of continuous learning and improvement

- Promote ownership and accountability among team members

- Celebrate successes and share lessons learnt to build momentum and support for DevOps practices

21. How do you ensure your infrastructure is secure in a DevOps environment?

To ensure that infrastructure is secure in a DevOps environment, you can take the following steps:

- Implement security best practices in the development process

- Use automated security testing tools to identify vulnerabilities

- Monitor infrastructure continuously to detect and respond to security threats promptly

- Employ role-based access control to restrict access to sensitive information

- Regularly update and patch systems and software to address known vulnerabilities

- Establish incident response and disaster recovery plans to minimize the impact of security incidents

22. Can you describe the role of security in a DevOps environment?

Security is a key element integrated into the entire software development lifecycle in a DevOps context. Security ensures the software is created to comply with rules and defend against any security risks.

DevOps teams should include professionals who know the current security standards, can spot security threats, and can put security best practices into action. This helps ensure that the software is secure from its creation and is continuously monitored throughout the deployment and maintenance phases.

23. How do you ensure your DevOps tools and technologies are up-to-date and secure?

The success of every DevOps project depends on maintaining the most recent and secure versions of DevOps tools and technologies. Regularly checking for updates and security patches for all the tools is crucial to ensuring this. You can accomplish this by subscribing to the vendors’ mailing lists or following their social media accounts. It is also advisable to employ security testing tools like vulnerability scanners and penetration testing programs to find and fix any security problems.

24. What are the key security considerations when building a DevOps pipeline?

Security should be considered throughout the development lifecycle when building a DevOps pipeline. Some key security considerations include ensuring that code is written securely, using secure and up-to-date software dependencies, and implementing security testing and vulnerability scanning tools.

Access control and authentication measures should also be implemented, and security incidents should be quickly identified and addressed.

25. Can you describe the process for securing application containers in a DevOps environment?

In a DevOps environment, securing application containers is critical to preventing cyber threats. The process typically starts with selecting a secure container registry and implementing a continuous integration and continuous deployment (CI/CD) pipeline, including vulnerability scanning and image signing.

Once the container images are deployed, access control and network segmentation are used to limit their exposure to potential threats. Regular security scanning, patching, monitoring, and logging are essential to maintaining container security.

26. How does DevOps help in reducing the time-to-market for software products?

DevOps combines software development and IT operations to deliver software products quickly and efficiently. DevOps reduces time-to-market by promoting automation, collaboration, Agile development, and infrastructure as code. Automation tools reduce manual testing and deployment, and collaboration helps identify and resolve issues quickly. Agile development allows for rapid feedback and infrastructure as code automates infrastructure management.

27. What is the difference between a container and a virtual machine?

Virtual machines (VMs) and containers are two different approaches to running software. Containers are portable, lightweight environments that share the host system’s kernel and resources, which lets applications run in a consistent environment across different systems.

A virtual machine (VM) runs a complete guest operating system on top of a host machine through a hypervisor. It operates independently of other VMs while using the host’s resources, such as memory and CPU, which makes it heavier and slower to start than a container.

28. How does automation help in DevOps?

Automation plays a crucial role in DevOps by streamlining software development. It eliminates repetitive and time-consuming tasks like testing and deployment, leading to faster time-to-market, enhanced software quality, and reduced chances of human error. Automation tools are instrumental in achieving these benefits by automating various aspects of the software development process.

Automation also increases efficiency, scalability, and reliability, enabling teams to focus on innovation and value creation.

29. Can you discuss the importance of identity and access management in a DevOps environment?

Identity and access management is critical in a DevOps environment to ensure that only authorized personnel can access the systems and resources. It helps manage users’ identities, access to resources, and permissions.

Identity and access management ensures that users have access to the resources required for their roles and responsibilities. It also helps detect and prevent unauthorized access and ensures access requests are verified and authenticated.
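A minimal sketch of role-based access control, the mechanism behind most identity and access management setups, is shown below. The role names, permission strings, and the `is_allowed` function are hypothetical. Real systems such as AWS IAM use much richer policy languages.

```python
# Hypothetical roles and the permissions each one carries.
ROLE_PERMISSIONS = {
    "developer": {"read:repo", "write:repo", "deploy:staging"},
    "release-manager": {"read:repo", "deploy:staging", "deploy:production"},
    "auditor": {"read:repo", "read:logs"},
}


def is_allowed(roles, permission: str) -> bool:
    """Grant access only if one of the user's roles carries the permission."""
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)


print(is_allowed(["developer"], "deploy:staging"))           # within the role
print(is_allowed(["developer"], "deploy:production"))        # outside the role
print(is_allowed(["release-manager"], "deploy:production"))  # within the role
```

Unknown roles get no permissions at all, so access is denied by default unless a role explicitly grants it.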

30. How do you approach testing in a DevOps environment?

In a DevOps environment, testing is integrated into the software development process. Automated testing tools ensure that code changes are thoroughly tested before deployment and that feedback is continuously provided to developers.

Testing is performed continuously throughout the software development lifecycle, allowing for early detection of issues and faster delivery of high-quality software.

31. Can you tell me what continuous testing and automation testing are?

Automation testing, as the name suggests, is a process of automating the manual testing process. It involves using separate testing tools that let developers create test scripts that can be executed repeatedly without manual intervention.

Continuous testing is the process of executing automated tests as part of the software delivery pipeline in DevOps. Each build is tested continuously in this process, allowing the development team to get fast business feedback to prevent the problems from progressing to the next stage of the software delivery lifecycle. This will dramatically speed up a developer’s workflow. They no longer need to manually rebuild the project and re-run all tests after making changes.
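The two ideas can be seen together in a small example: the test case is the automated test, and running it as a pipeline step on every build is continuous testing. This sketch uses Python's built-in `unittest` module. The function under test, `apply_discount`, and the `run_suite` helper are made up for illustration.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Function under test: reduce a price by a percentage."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    def test_regular_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(200.0, 120)


def run_suite() -> bool:
    """Run the tests the way a pipeline step would; True means the build may proceed."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()


print("build may proceed:", run_suite())
```

A CI server would call something like `run_suite` after every commit and block the next stage when it returns False.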

32. What are the benefits of automation testing?

Automation testing has several advantages, including quicker and more effective testing, expanded coverage, and higher test accuracy. It can save time and money in the long run because automated testing can be repeated without human intervention.

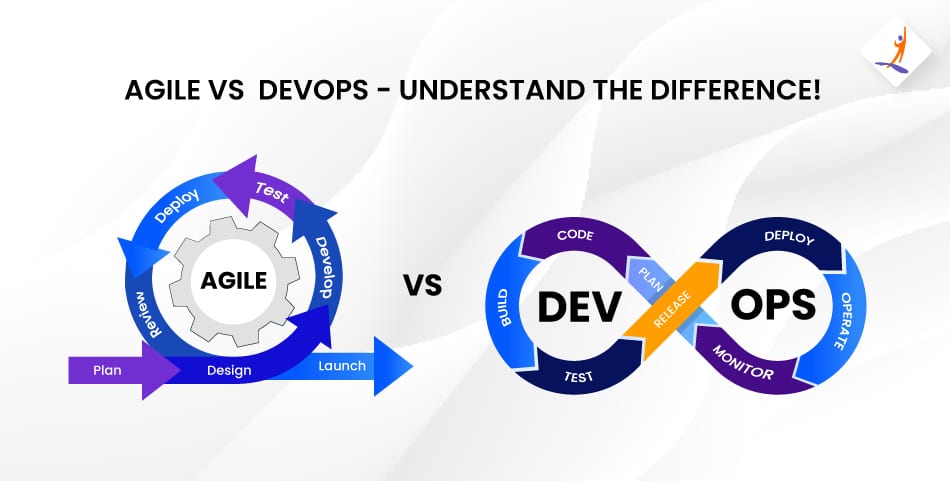

33. Can one consider DevOps as an Agile methodology?

DevOps complements the Agile methodology but is not itself an Agile methodology: Agile focuses on iterative development, while DevOps extends the same ideas to delivery and operations.

34. What is the role of continuous testing in DevOps?

Continuous testing is a critical component of DevOps that involves testing early and often throughout the software development process. It provides continuous feedback to developers, ensuring that code changes are tested thoroughly and defects are detected early. Continuous testing helps improve software quality, reduce costs and time-to-market, and increase customer satisfaction.

35. What is the role of cloud computing in DevOps?

Cloud computing plays a vital role in DevOps, as it offers a versatile and scalable infrastructure for software development and deployment. Its on-demand computing resources, which are easy to provision and manage, are instrumental in empowering DevOps teams. By leveraging cloud services, these teams can automate the deployment process, collaborate effectively, and integrate various DevOps practices.

With an extensive range of services such as virtual machines, containers, serverless computing, and managed databases, cloud computing empowers teams to expedite software delivery, enhance scalability, and reduce infrastructure expenses. It significantly augments the overall DevOps lifecycle by facilitating faster development and deployment cycles.

36. How can DevOps help improve customer satisfaction and drive business growth?

DevOps can increase customer satisfaction and drive business growth by providing better software faster. DevOps teams can offer features that satisfy customers’ expectations quickly by concentrating on collaboration, continuous improvement, and customer feedback. It can result in more loyal consumers and, ultimately, the company’s growth.

37. How can DevOps help organizations reduce the risk of software failures?

DevOps reduces the risk of software failures by promoting collaboration between teams, continuous testing, and monitoring. It also encourages a culture of learning from mistakes to address issues quickly and prevent them from happening again.

38. What are some common misconceptions about DevOps?

One common misconception about DevOps is that it is solely focused on tools and automation. In reality, it is a cultural and organizational shift that involves collaboration between teams and breaking down barriers.

Another misconception is that DevOps is only for startups or tech companies, when it can be beneficial for any organization looking to improve its software development and delivery processes.

A third misconception is that DevOps is solely the responsibility of the IT department, when in fact it requires buy-in and involvement from all levels of the organization.

39. How can DevOps affect culture change to improve the business?

DevOps can positively affect business culture by encouraging team collaboration, enhancing communication, and promoting a culture of continuous learning and improvement. This cultural change can result in delivering software products and services that are quicker and more effective, with greater customer satisfaction. Adopting DevOps practices enables organizations to develop a culture that values adaptability, creativity, and teamwork, ultimately improving business results.

40. Our team has some ideas and wants to turn those ideas into a software application. Now, as a manager, I am confused about whether I should follow the Agile work culture or DevOps. Can you tell me why I should follow DevOps over Agile?

According to the current market trend, instead of releasing big sets of features in an application, companies are launching small features for software with better product quality and quick customer feedback, for high customer satisfaction. To keep up with this, we have to:

- Increase the deployment frequency with utmost safety and reliability

- Lower the failure rate of new releases

- Shorten the bug resolution time

DevOps fulfils all these requirements for fast and reliable development and deployment of software. Companies like Amazon and Google have adopted DevOps and are launching thousands of code deployments per day. Agile, on the other hand, only focuses on software development.

41. How is DevOps different from Agile methodology?

DevOps and Agile are two methodologies that aim to improve software development processes. Agile focuses on delivering features iteratively and incrementally, while DevOps focuses on creating a collaborative culture between development and operations teams to achieve faster delivery and more reliable software.

DevOps also emphasizes the use of automation, continuous integration and delivery, and continuous feedback to improve the software development process. While Agile is primarily focused on the development phase, DevOps is focused on the entire software development lifecycle, from planning and coding to testing and deployment.

42. What is the role of configuration management in DevOps?

The major advantages of configuration management are given below:

- It enables us to manage the configurations on multiple systems.

- It allows us to standardize the configurations on all systems in a cluster.

- It helps us in the administration and management of multiple servers in the architecture.
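The standardization described above typically depends on idempotent operations: applying the same desired configuration twice changes nothing the second time. Here is a small sketch of that idea. The server names, settings, and the `ensure_setting` and `converge` functions are hypothetical. Tools like Ansible or Puppet apply the same principle to real hosts.

```python
def ensure_setting(config: dict, key: str, value: str) -> bool:
    """Bring one setting to the desired value; return True only if it changed."""
    if config.get(key) == value:
        return False
    config[key] = value
    return True


def converge(servers: dict, desired: dict) -> dict:
    """Apply the same desired settings to every server and report what changed."""
    report = {}
    for name, config in servers.items():
        report[name] = [k for k, v in desired.items() if ensure_setting(config, k, v)]
    return report


# Hypothetical servers that have drifted apart over time.
servers = {
    "web-1": {"max_connections": "100", "log_level": "debug"},
    "web-2": {"max_connections": "500"},
}
desired = {"max_connections": "500", "log_level": "info"}

first = converge(servers, desired)   # fixes whatever differs
second = converge(servers, desired)  # nothing left to change

print(first)
print(second)
```

After the first run both servers hold identical settings, and the empty second report shows that re-running the configuration is safe.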

43. What is the role of AWS in DevOps?

AWS in DevOps works as a cloud provider, and it has the following roles:

- Flexible services: AWS provides us with ready-to-use resources for implementation.

- Scaling purpose: We can deploy thousands of machines on AWS, depending on the requirement.

- Automation: AWS helps us automate tasks using various services.

- Security: Using its security options (IAM), we can secure our deployments and builds.

44. Can you list certain key performance indicators (KPIs) that are used to gauge the success of DevOps?

- Deployment Frequency: It is the number of times an application is deployed in a specific period.

- Mean Time to Recovery (MTTR): It is the average time taken to restore a failed system.

- Lead Time: It is the time taken from code commit to production release.

- Change Failure Rate: It is the percentage of changes that cause issues or failures.

- Time to Detect and Respond (TTDR): It is the average time taken to detect and respond to incidents.
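To show how some of these KPIs might be calculated, the sketch below computes four of them from a handful of records. All timestamps are invented sample data, and the variable names are assumptions for this example. In practice the data would come from your CI/CD and incident tracking tools.

```python
from datetime import datetime
from statistics import mean

# Hypothetical sample data: (commit time, deploy time, caused a failure?)
deployments = [
    (datetime(2024, 1, 1, 9), datetime(2024, 1, 1, 13), False),
    (datetime(2024, 1, 2, 10), datetime(2024, 1, 2, 12), True),
    (datetime(2024, 1, 3, 8), datetime(2024, 1, 3, 14), False),
    (datetime(2024, 1, 5, 9), datetime(2024, 1, 5, 13), False),
]
# Hypothetical incidents: (failure started, service restored)
incidents = [
    (datetime(2024, 1, 2, 12, 30), datetime(2024, 1, 2, 13, 30)),
]
period_days = 7

# Deployment frequency: deployments per day over the period.
deployment_frequency = len(deployments) / period_days
# Lead time: average hours from commit to production release.
lead_time_hours = mean((d - c).total_seconds() / 3600 for c, d, _ in deployments)
# Change failure rate: share of deployments that caused a failure.
change_failure_rate = sum(failed for _, _, failed in deployments) / len(deployments)
# MTTR: average hours needed to restore service.
mttr_hours = mean((r - s).total_seconds() / 3600 for s, r in incidents)

print(f"deployments per day: {deployment_frequency:.2f}")
print(f"lead time (h):       {lead_time_hours:.1f}")
print(f"change failure rate: {change_failure_rate:.0%}")
print(f"MTTR (h):            {mttr_hours:.1f}")
```

Tracking these numbers over several periods matters more than any single value, since the trend shows whether the DevOps practices are actually improving delivery.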

45. What can be a preparatory approach for developing a project using the DevOps methodology?

Understanding the project’s objectives, specifications, and deadlines is essential to developing a project using the DevOps process. All stakeholders should be included in the planning process, and means for communication should be established.

Teams can also create a culture of collaboration, automate crucial procedures, and use a continuous improvement strategy. On the basis of the particular requirements and objectives of the project, it is also crucial to choose the right tools and technologies.

46. What are the various branching strategies used in version control systems?

Several branching strategies are used in version control systems, including trunk-based development, feature branching, release branching, and git-flow. Trunk-based development involves committing changes directly to the main branch, while feature branching involves creating a new branch for each new feature.

Release branching involves creating a separate branch for each release, and git-flow combines feature and release branches to create a more structured branching strategy. Each strategy has its advantages and disadvantages, and the choice of strategy depends on the specific needs of the project and the team.

47. What are the benefits of using version control?

- It helps improve the collaborative work culture: Here, team members are allowed to work freely on any file at any time. The version control system allows us to merge all changes into a common version.

- It keeps different versions of code files securely: All the previous versions and variants of code files are neatly packed up inside the version control system.

- It records what happened: Every time we save a new version of our project, the version control system asks us for a short description of what was changed. It also lets us see what changes were made to the file’s content and who made them.

- It keeps a backup: A distributed version control system like Git allows all team members to access the complete history of the project file so that in case there is a breakdown in the central server, they can use any of their teammate’s local Git repository.

48. What are some common integration challenges that can arise when using multiple DevOps tools?

When using multiple DevOps tools, integration challenges can arise, such as data incompatibility, conflicting configurations, and a lack of communication between tools. For example, a deployment tool may not be compatible with a monitoring tool, or a configuration management tool may have different settings from the testing tool.

Organizations can use integration frameworks, middleware, and APIs to facilitate communication between tools and ensure data consistency to overcome these challenges. It is also important to establish clear communication channels between teams using different tools to ensure effective collaboration.

DevOps Interview Questions for Source Code Management: Git

49. Can you tell me the advantages of using Git?

- Data redundancy and replication

- High availability

- Only one Git directory per repository

- Superior disk utilization and network performance

- Collaboration friendly

- Can be used for any sort of project

50. Are git fetch and git pull the same?

Both git pull and git fetch commands are used to sync the local repository to the remote repository in git.

| Git Pull | Git Fetch |
| --- | --- |
| Downloads commits from the remote repository and merges them into the local branch. | Downloads commits from the remote repository but does not merge them into the local branch. After a git fetch, you have to run git merge to bring the new commits into the local branch. |
| Summary: git pull = git fetch + git merge | Summary: git fetch = download changes only (no merge) |
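The relationship between the two commands can be illustrated with a toy model. This is not how Git is implemented: the `Clone` class is invented for this sketch, commits are plain strings, and only fast-forward merges are handled. It only shows which reference each command moves.

```python
class Clone:
    """Toy model of a local clone with one branch and its remote-tracking ref."""

    def __init__(self, remote):
        self.remote = remote          # commits on the server (shared list)
        self.tracking = list(remote)  # origin/main as last seen by this clone
        self.local = list(remote)     # the local main branch

    def fetch(self):
        # Download new commits; only the remote-tracking ref moves.
        self.tracking = list(self.remote)

    def merge(self):
        # Fast-forward the local branch to the remote-tracking ref.
        if self.tracking[: len(self.local)] == self.local:
            self.local = list(self.tracking)

    def pull(self):
        # pull is fetch followed by merge.
        self.fetch()
        self.merge()


remote_main = ["a1"]
alice = Clone(remote_main)
remote_main.append("b2")  # someone else pushes a new commit

alice.fetch()
after_fetch = (alice.tracking, alice.local)
alice.merge()

print(after_fetch)  # tracking ref updated, local branch untouched
print(alice.local)  # local branch updated only after the merge
```

After the fetch the new commit is available locally for inspection, but the working branch changes only once the merge runs, which is why fetch is the safer command when you want to review incoming changes first.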

51. How do you handle merge conflicts in Git?

In order to resolve merge conflicts in Git, we need to follow three steps:

- Understand what happened: The conflict may arise because the same lines of a file were edited on both branches, because a file was deleted on one branch and modified on the other, or because both branches added a file with the same name. You can see which files are affected by running ‘git status’.

- Mark and clean up the conflict: When we open the file, Git has marked the conflicted area with ‘<<<<<<< HEAD’, ‘=======’, and ‘>>>>>>> [other/branch/name]’. Edit the file to keep the content you want and remove the markers.

- Stage the resolved files with ‘git add’ and run ‘git commit’ to complete the merge.
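To make the structure of the conflict markers clearer, the following sketch splits a conflicted file into its two candidate versions. The file contents, the branch name `feature/retry-policy`, and the `split_conflict` function are made up for this example. In practice you would resolve the conflict in an editor or merge tool rather than with a script.

```python
def split_conflict(text: str):
    """Separate a conflicted file into 'ours' (HEAD) and 'theirs' (incoming)."""
    ours, theirs, side = [], [], None
    for line in text.splitlines():
        if line.startswith("<<<<<<< "):
            side = "ours"        # lines that follow come from the current branch
        elif line.startswith("=======") and side == "ours":
            side = "theirs"      # lines that follow come from the other branch
        elif line.startswith(">>>>>>> "):
            side = None          # end of the conflicted region
        elif side == "ours":
            ours.append(line)
        elif side == "theirs":
            theirs.append(line)
        else:                    # outside a conflict both versions agree
            ours.append(line)
            theirs.append(line)
    return "\n".join(ours), "\n".join(theirs)


conflicted = """\
timeout = 30
<<<<<<< HEAD
retries = 3
=======
retries = 5
>>>>>>> feature/retry-policy
verbose = true"""

ours, theirs = split_conflict(conflicted)
print(ours)
print("---")
print(theirs)
```

Everything between `<<<<<<<` and `=======` is the current branch's version, and everything between `=======` and `>>>>>>>` is the incoming version. Resolving the conflict means choosing or combining these and deleting the marker lines.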

52. Can you tell me some advantages of the forking workflow over other Git workflows?

The forking workflow is fundamentally different from other Git workflows. Instead of using a single server-side repository as the ‘central’ codebase, it gives every developer their own server-side repository. This workflow is most often seen in public open-source projects.

The main advantage is that contributions can be integrated without everyone needing push access to a single central repository, which helps keep the project history clean. Developers push to their own server-side repositories, and only the project maintainer pushes to the official repository. When developers are ready to publish their local commits, they push them to their own public repository and then open a pull request against the main repository, which notifies the project maintainer that an update is ready to be integrated.

53. When do you use ‘git rebase’ instead of ‘git merge’?

Both ‘git rebase’ and ‘git merge’ are designed to integrate changes from one branch into another; they just do it in different ways.

When we perform rebase of a feature branch onto the master branch, we move the base of the feature branch to the master branch’s ending point.

By performing a merge, we take the contents of the feature branch and integrate them with the master branch. As a result, only the master branch is changed, but the feature branch history remains the same. Merging adds a new commit to your history.

Rebasing a branch that other people have already pulled can leave their repositories inconsistent with yours, so it should be avoided on shared branches. For individuals working on a private branch, rebasing makes a lot of sense. To see the history exactly as it happened, we should use merge. Merge preserves history, whereas rebase rewrites it.
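The difference can be sketched with a toy commit graph in Python. This is a simplified model, not how Git is implemented: real commit IDs also cover the tree, author, and timestamps, and every function name here is hypothetical.

```python
import hashlib


def commit_id(message, parents):
    """Derive a short ID from the message and parent IDs (a loose stand-in for Git's hashing)."""
    data = message + "|" + ",".join(parents)
    return hashlib.sha1(data.encode()).hexdigest()[:7]


def make_commit(message, parents):
    return {"id": commit_id(message, parents), "message": message, "parents": list(parents)}


def merge(master_tip, feature_tip):
    """A merge adds one new commit with two parents; existing commits stay untouched."""
    return make_commit("Merge feature into master", [master_tip["id"], feature_tip["id"]])


def rebase(feature_commits, new_base):
    """A rebase replays each feature commit on top of new_base, producing new IDs."""
    replayed, parent = [], new_base
    for commit in feature_commits:
        parent = make_commit(commit["message"], [parent["id"]])
        replayed.append(parent)
    return replayed


base = make_commit("initial", [])
m1 = make_commit("master work", [base["id"]])
f1 = make_commit("feature step 1", [base["id"]])
f2 = make_commit("feature step 2", [f1["id"]])

merged = merge(m1, f2)          # one extra commit, feature history preserved
rebased = rebase([f1, f2], m1)  # same messages, different IDs, linear history

print("merge parents:", merged["parents"])
print("original feature IDs:", [f1["id"], f2["id"]])
print("rebased feature IDs: ", [c["id"] for c in rebased])
```

Because the replayed commits get new IDs, anyone who already has the original feature commits ends up with a diverging copy, which is why rebasing is usually kept to private branches.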

54. I just made a bad git commit and made it public, and I need to revert the commit. Can you suggest how to do that?

Here we need to use the Git command:

git revert <name the commit you wish to revert>

This command can revert any commit just by adding the commit ID. It creates a new commit that undoes the changes, so the public history is not rewritten.

55. Can you tell me how to squash the last n commits into a single commit? Is it even possible?

To squash the last n commits into a single commit, we can use:

git reset --soft HEAD~n && git commit

DevOps Interview Questions for Continuous Integration: Jenkins

56. I want to move or copy Jenkins from one server to another. Is it possible? If yes, how?

I would suggest copying the Jenkins jobs directory from the old server to the new one. We can just move a job from one installation of Jenkins to another by copying the corresponding job directory.

Or, we can also make a copy of an existing Jenkins job by making a clone of that job directory in a different name.

Another way is that we can rename an existing job by renaming the directory. But, if you change the name of a job, you will need to change any other job that tries to call the renamed job.

57. I have 40 jobs in the Jenkins dashboard and I need to build them all at once. Is it possible?

Yes, it is. With the help of a Jenkins plugin, we can chain the jobs so that they are built one after the other: when one parent job is carried out, the other jobs are triggered automatically. We also have the option to use Jenkins Pipeline jobs for the same purpose.

58. How will you secure Jenkins?

The way to secure Jenkins is as follows:

- Ensure that global security is on

- Check whether Jenkins is integrated with the company’s user directory with an appropriate plugin

- Make sure that project-based matrix authorization is enabled to fine-tune access

- Automate the process of setting rights or privileges in Jenkins with a custom version-controlled script

- Limit physical access to Jenkins data or folders

- Periodically run security audits

59. Can you please tell me how to create a backup and copy files in Jenkins?

To create a backup, all we need to do is periodically back up our JENKINS_HOME directory. This contains all of the build configurations of our jobs, our agent (slave) node configurations, and our build history. To create a backup of our Jenkins setup, just copy this directory. We can also copy a job directory to clone or replicate a job, or rename the directory to rename the job.
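As a rough illustration of such a periodic backup, the Python sketch below archives a directory into a timestamped .tar.gz file using only the standard library. The function name `backup_jenkins_home`, the archive naming scheme, and the temporary demo directory are assumptions made for this example; a real setup would point at the actual JENKINS_HOME and might exclude large workspace folders.

```python
import shutil
import tarfile
import tempfile
import time
from pathlib import Path


def backup_jenkins_home(jenkins_home, backup_dir):
    """Archive the given JENKINS_HOME directory into a timestamped .tar.gz file."""
    jenkins_home = Path(jenkins_home)
    backup_dir = Path(backup_dir)
    backup_dir.mkdir(parents=True, exist_ok=True)
    stamp = time.strftime("%Y%m%d-%H%M%S")
    archive_base = backup_dir / f"jenkins-home-{stamp}"
    # make_archive appends the ".tar.gz" suffix and returns the final path as a string
    return shutil.make_archive(str(archive_base), "gztar", root_dir=str(jenkins_home))


# Demo against a throwaway directory that mimics a tiny JENKINS_HOME
with tempfile.TemporaryDirectory() as tmp:
    home = Path(tmp) / "jenkins_home"
    (home / "jobs" / "demo").mkdir(parents=True)
    (home / "jobs" / "demo" / "config.xml").write_text("<project/>")
    archive = backup_jenkins_home(home, Path(tmp) / "backups")
    with tarfile.open(archive) as tar:
        names = tar.getnames()

print(archive)
print(names)
```

Running a script like this from cron or from a scheduled Jenkins job is one way to make the backup periodic.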

60. What are Jenkins Pipeline and CI/CD Pipeline?

Jenkins Pipeline can be defined as a suite of plugins that supports implementing and integrating continuous delivery pipelines into Jenkins.

Continuous integration or continuous delivery pipeline consists of build, deploy, test, and release. The pipeline feature is very time-saving. In other words, a pipeline is a group of build jobs that are chained and integrated in a sequence.

DevOps Interview Questions for Continuous Testing: Selenium

61. How to launch a browser using WebDriver?

For Firefox:

WebDriver driver = new FirefoxDriver();

For Chrome:


WebDriver driver = new ChromeDriver();

For Internet Explorer (IE):

WebDriver driver = new InternetExplorerDriver();

62. Explain the different Selenium components.

The following are the different components of Selenium:

- Selenium Integrated Development Environment (IDE) – The Selenium IDE consists of a simple framework and comes with a Firefox plug-in that can be easily installed. This Selenium component should be used for prototyping.

- Selenium Remote Control (RC) – It is a testing framework for developers and QA that supports coding in programming languages like Java, PHP, C#, Perl, etc. This helps automate the UI testing process of web applications against any HTTP website. Selenium RC has since been deprecated in favor of WebDriver.

- Selenium WebDriver – It has a better approach to automating the testing process of web-based applications and does not rely on JavaScript. This web framework allows cross-browser tests to be performed.

- Selenium Grid – This proxy server works with Selenium RC, and with the help of browsers, it is able to run parallel tests on different nodes or machines.

63. What are the testing types supported by Selenium?

Selenium supports regression testing and functional testing.

64. Are there any technical challenges with Selenium?

- It supports only web-based applications.

- It does not support the Bitmap comparison.

- No vendor support is available for Selenium compared to commercial tools like HP UFT.

- As there is no object repository concept, the maintainability of objects becomes very complex.

65. When should I use Selenium Grid?

Selenium Grid can be used to execute the same or different test scripts on multiple platforms and browsers, concurrently, in order to achieve distributed test execution. It allows testing under different environments, remarkably saving execution time.

66. Describe the difference between driver.close() and driver.quit().

The driver.close command closes the focused browser window. But, the driver.quit command calls the driver.dispose method which closes all browser windows and also ends the WebDriver session.

DevOps Chef Interview Questions

67. Why are SSL certificates used in Chef?

SSL certificates are used in Chef to establish secure and encrypted communication channels between Chef components and nodes. These certificates verify the authenticity of Chef servers and nodes, ensuring secure data transmission. By encrypting communication, SSL certificates protect sensitive information, such as authentication credentials and configuration data, from unauthorized access or tampering. This enhances the overall security of the Chef infrastructure and helps maintain the integrity and confidentiality of the data being exchanged.

68. How does Chef differ from other configuration management tools like Puppet and Ansible?

Chef differs from other configuration management tools like Puppet and Ansible mainly in how configurations are written and applied. Chef recipes are written in a Ruby-based DSL and are executed in the order in which they are written, which gives Chef a more procedural, code-driven feel. Puppet uses its own declarative language, and Ansible uses YAML playbooks pushed over SSH without an agent. In all three, users describe the desired state of their infrastructure, and the tool works to bring the nodes to that state. Additionally, Chef has a strong focus on testing and compliance, making it a popular choice in enterprise environments with strict security and compliance requirements.

69. What are the key components of a Chef deployment?

The key components of a Chef deployment include the Chef Server, which acts as the central hub for storing configuration data and Chef code; Chef Client, which runs on each node and applies the configurations defined by the Chef code; and the Chef Workstation, which is used by developers and administrators to write and test the Chef code before pushing it to the Chef Server for deployment. Other important components include cookbooks, recipes, and resources, which define the desired state of the infrastructure and the actions needed to achieve it.

DevOps Puppet Interview Questions

70. What are Puppet Manifests?

Every Puppet Node or Puppet Agent has its configuration details in Puppet Master, written in the native Puppet language. These details are termed Puppet Manifests. The manifests are composed of Puppet code, and their filenames use the .pp extension.

For instance, we can write a manifest in Puppet Master that creates a file and installs Apache on all Puppet Agents or slaves that are connected to the Puppet Master.

71. How can I configure systems with Puppet?

In order to configure systems in a client or server architecture with Puppet, we need to use the Puppet Agent and the Puppet Master applications. In stand-alone architecture, we use the Puppet apply application.

72. What is a Puppet Module? How is it different from the Puppet Manifest?

A Puppet Module is nothing but a collection of manifests and data (e.g., facts, files, and templates). Puppet Modules have a specific directory structure. They are useful for organizing the Puppet code because, with Puppet Modules, we can split the Puppet code into multiple manifests. It is considered best practice to use Puppet Modules to organize almost all of your Puppet Manifests.

Puppet Modules are different from Puppet Manifests. Manifests are nothing but Puppet programs, composed of the Puppet code. File names of Puppet Manifests use the .pp extension.

73. Can you tell me what a Puppet codedir is?

It is the main directory for code and data in Puppet. It consists of environments (containing manifests and modules), a global modules directory for all the environments, and your Hiera data.

74. Where do you find codedir in Puppet?

It is found at one of the following locations:

Unix/Linux Systems:

/etc/puppetlabs/code

Windows:


%PROGRAMDATA%\PuppetLabs\code (usually, C:\ProgramData\PuppetLabs\code)

Non-root users:

~/.puppetlabs/etc/code

DevOps Ansible Interview Questions

75. How does Ansible work?

Ansible is an open-source automation tool whose architecture involves two types of machines:

- Controlling machines

- Nodes

Ansible is installed on the controlling machine, and from that machine, the nodes are managed over SSH. The nodes’ locations are specified by inventories on the controlling machine.

Since Ansible is an agentless tool, it doesn’t require any mandatory installations on remote nodes. So, there is no need for background programs to be executed while it is managing any nodes.

Ansible can handle a lot of nodes from a single system over an SSH connection with the help of Ansible Playbooks. Playbooks are capable of performing multiple tasks, and they are in the YAML file format.

76. Sometimes, we use ad-hoc commands instead of Playbooks in Ansible. Can you tell me what’s the difference between Ansible Playbook and an ad-hoc command? Also, cite when to use them.

Ad-hoc commands are used to do something quickly, and they are mostly for one-time use, whereas an Ansible Playbook is used to perform repeated actions. We use an ad-hoc command for a non-repetitive activity, such as checking connectivity or restarting a service once, and a Playbook when the steps should be saved and rerun.

77. Why should I use Ansible?

Ansible can help in:

- Configuration Management

- Application Deployment

- Task Automation

78. What are handlers in Ansible?

Handlers in Ansible are just like regular tasks inside an Ansible Playbook, but they run only when another task notifies them through a ‘notify’ directive and that task reports a change. A notified handler runs once, by default at the end of the play, even if several tasks notify it.
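The notify-then-run behavior can be modeled in a few lines of Python. This is a toy simulation of the idea, not Ansible's implementation: `run_play`, the task dictionaries, and the handler names are all hypothetical.

```python
def run_play(tasks, handlers):
    """Run tasks in order, then run each notified handler once, in definition order."""
    notified = set()
    log = []
    for task in tasks:
        changed = task["action"]()  # each action reports whether it changed anything
        log.append(f"task: {task['name']} (changed={changed})")
        if changed and "notify" in task:
            notified.add(task["notify"])
    # Handlers run after all tasks, and only if some changed task notified them
    for name, action in handlers.items():
        if name in notified:
            action()
            log.append(f"handler: {name}")
    return log


tasks = [
    {"name": "update nginx.conf", "action": lambda: True, "notify": "restart nginx"},
    {"name": "update site.conf", "action": lambda: True, "notify": "restart nginx"},
    {"name": "ensure nginx installed", "action": lambda: False, "notify": "reload systemd"},
]
handlers = {
    "restart nginx": lambda: None,
    "reload systemd": lambda: None,
}

log = run_play(tasks, handlers)
for entry in log:
    print(entry)
```

In this run, "restart nginx" fires once even though two tasks notified it, and "reload systemd" never fires because its task reported no change.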

79. Have you heard about Ansible Galaxy? What does it do?

Yes, I have. Ansible Galaxy refers to the ‘Galaxy website’ by Ansible, where users share Ansible roles. It is used to install, create, and manage Ansible roles.

80. What are the benefits of Ansible?

Ansible is an open-source configuration management tool that helps us in the following:

- Automating tasks

- Managing configurations

- Deploying applications

- Efficiency

81. What are the prerequisites to install Ansible 2.8 on Linux?

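Ansible 2.8 does not require Security-Enhanced Linux (SELinux) to be enabled. However, if SELinux is enabled on the remote nodes, the libselinux-python package has to be installed on them. Python also has to be installed on both the controlling machine and the remote nodes.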

DevOps Scenario-Based Interview Questions

82. Can you write the syntax for building a docker image?

To build a docker image, we use the following command:

docker build -f <path/to/Dockerfile> -t image_name:version <build-context>

83. How can Docker containers be shared with different nodes?

Docker containers can be run across different nodes with the help of Docker Swarm. Developers and IT administrators use this tool to create and manage a cluster of swarm nodes within the Docker platform. A swarm consists of two types of nodes: manager nodes and worker nodes.

84. What are the advantages of Docker over virtual machines?

Below are the differences in multiple criteria that show why Docker has advantages over virtual machines.

- Memory Space – In terms of memory, Docker occupies less space than a virtual machine.

- Boot-up Time – Docker has a shorter boot-up time than a virtual machine.

- Performance – Docker containers show better performance as they are hosted in a single Docker engine, whereas performance is unstable if multiple virtual machines are run.

- Scaling – Docker is easy to scale up compared to virtual machines.

- Efficiency – The efficiency of docker is higher, which is an advantage over virtual machines.

- Portability – Docker doesn’t have the same cross-platform compatibility issues with porting as virtual machines do.

- Space Allocation – Data volumes can be shared and used repeatedly across multiple containers in Docker, unlike virtual machines that cannot share data volumes.

85. What is the concept of sudo in Linux?

Sudo is a program for Unix/Linux-based systems that provides the ability to allow specific users to use specific system commands at the system’s root level. It is an abbreviation of ‘superuser do’, where ‘super user’ means the ‘root user’.

86. Can you tell me the purpose of SSH?

SSH is nothing but a secure shell that allows users to log in with a secure and encrypted mechanism into remote computers. It is used for encrypted communications between two hosts on an unsafe network. It supports tunneling, forwarding TCP, and also transferring files.

87. What is NRPE in Nagios?

NRPE stands for ‘Nagios Remote Plugin Executor’. As the name suggests, it allows you to execute Nagios plugins remotely on other Linux or Unix machines.


It can help monitor remote machine performance metrics such as disk usage, CPU load, etc. It can communicate with some of the Windows agent add-ons. We can execute scripts and check metrics on remote Windows machines as well.

88. Can you tell me why I should use Nagios?

- To plan for infrastructure upgrades before outdated systems fail

- To respond to issues quickly

- To fix problems automatically when detected

- To coordinate with the responses from the technical team

- To ensure that the organization’s service-level agreements with the clients are being met

- To make sure that the IT infrastructure outages have only a minimal effect on the organization’s net income

- To monitor the entire infrastructure and business processes

89. What is Nagios Log Server?

Nagios Log Server simplifies the process of searching the log data. Nagios Log Server is the best choice for performing tasks such as setting up alerts, notifying when potential threats arise, simply querying the log data, and quickly auditing any system. With Nagios Log Server, we can get all of our log data in one location.

90. What is a namespace in Kubernetes?

Namespaces are a way to divide cluster resources between multiple users in Kubernetes. In other words, they are useful when multiple teams or users share the same cluster, which could otherwise lead to name collisions.

91. What is kubectl?

By definition, kubectl is a command-line interface for running commands against Kubernetes clusters. Here, ‘ctl’ stands for ‘control’. This ‘kubectl’ command-line interface can be used to deploy applications, inspect and manage cluster resources, and view logs.

92. How does Kubernetes help in container orchestration and deployment in a DevOps environment?

Kubernetes helps in container orchestration and deployment in a DevOps environment by providing a platform for managing, scaling, and automating the deployment of containerized applications. It allows developers to deploy and manage applications consistently across different environments and infrastructures, ensuring they are reliable, scalable, and resilient.

Kubernetes automates many tasks related to the deployment and scaling of containers, such as load balancing, scaling, and self-healing, which helps to reduce manual effort and errors and ensure that applications are always available and performing.

93. What are some common challenges in managing Kubernetes clusters in a DevOps environment, and how can they be addressed?

In a DevOps environment, complicated configuration management, monitoring, and troubleshooting, as well as maintaining security and compliance, are common problems in operating Kubernetes clusters.

To address these problems, several strategies can be implemented. Firstly, adopting best practices like infrastructure-as-code and declarative configuration for cluster management can streamline operations. Secondly, automating tasks through CI/CD pipelines can save time and effort. Furthermore, implementing monitoring and logging solutions enables better visibility into cluster performance and security, aiding in problem-solving efforts.

94. Which file is used to define dependency in Maven?

In Maven, we define all dependencies inside pom.xml so that all the dependencies will be downloaded and can be used within the project.
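To show where the dependencies sit inside pom.xml, here is a small Python sketch that parses a POM and lists its dependency coordinates. The sample POM (a made-up `com.example:demo-app` project depending on JUnit) and the function name `list_dependencies` are illustrative assumptions; Maven itself does far more, such as resolving transitive dependencies.

```python
import xml.etree.ElementTree as ET

# POM files declare this XML namespace on the <project> element
POM_NS = {"m": "http://maven.apache.org/POM/4.0.0"}

# Hypothetical minimal pom.xml content
SAMPLE_POM = """\
<project xmlns="http://maven.apache.org/POM/4.0.0">
  <modelVersion>4.0.0</modelVersion>
  <groupId>com.example</groupId>
  <artifactId>demo-app</artifactId>
  <version>1.0.0</version>
  <dependencies>
    <dependency>
      <groupId>junit</groupId>
      <artifactId>junit</artifactId>
      <version>4.13.2</version>
      <scope>test</scope>
    </dependency>
  </dependencies>
</project>
"""


def list_dependencies(pom_text):
    """Return (groupId, artifactId, version) for each directly declared dependency."""
    root = ET.fromstring(pom_text)
    deps = []
    for dep in root.findall("m:dependencies/m:dependency", POM_NS):
        deps.append((
            dep.findtext("m:groupId", namespaces=POM_NS),
            dep.findtext("m:artifactId", namespaces=POM_NS),
            dep.findtext("m:version", namespaces=POM_NS),
        ))
    return deps


print(list_dependencies(SAMPLE_POM))
```

Each `<dependency>` entry is identified by its groupId, artifactId, and version, which is what Maven uses to download the artifact.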

95. What are the benefits of using Maven in a DevOps environment?

Maven provides several benefits for DevOps teams, including centralized management of project dependencies, simplified build configuration using a declarative approach, and automated build and deployment processes through integration with CI/CD pipelines.

Maven also supports modular project structures, allowing teams to develop and test individual components separately, and provides consistent and reproducible builds across development, testing, and production environments. These benefits enable faster and more efficient delivery of software applications in a DevOps environment.

96. Your company plans to move some applications to the cloud. How would you approach the migration plan while keeping security, compliance, and cost optimization in mind?

Let’s imagine our company has a few internal applications that we want to move to the public cloud – like AWS or Azure. How should we approach this?

First – I would make a plan looking at things like:

- Security – We need to make sure our data and apps are secure when they move to the cloud. Adding firewall rules, network security groups, role-based access etc. Making sure only authorized people can access them.

- Compliance – Based on our industry, there may be rules and policies we need to follow even in the cloud – like HIPAA healthcare policies or PCI data standards. We need to check what compliance needs we have and implement those controls in the cloud.

- Cost – Clouds provide different instance types, reserved instances etc. So we should optimize and right size our resources to save costs. Only paying for what we use. Also, think about auto-scaling resources up and down based on usage and demand.

The migration plan would cover setting up the right cloud accounts and access controls, configuring networks and firewalls, and deploying servers and resources as needed while making sure they comply with all our policies. Testing things out first with non-production data. And figuring out how to best monitor, scale and optimize all these applications over time as needed.

97. A new feature released to production starts causing increased CPU load, latency issues and some failures. The team is asking you why. How would you approach debugging this issue? What techniques and tools would you use?

Let’s say our app has a new feature that started causing problems – things are running slow, some requests are failing, and our servers are working harder. The developers want to know why.

Some ways I’d start investigating as a DevOps engineer:

- Look at metrics and monitoring – Many DevOps tools can show metrics for things like CPU usage, memory, and network traffic. I’d check graphs around the time issues started happening. If the CPU spiked, that’s a clue.

- Review logs – Application and web server logs often record errors or warnings about issues. Search logs from that timeframe for any common errors or repeating patterns.

- Trace requests – Tools like distributed tracing can follow a specific request through microservices. This can help pinpoint high response times and failures to a specific service.

- Compare configurations – I’d check if any infrastructure or application changes were made right before issues started – new cloud settings, firewall rules, dependency versions etc. Roll back changes to test.

- Performance test – Simulate user traffic against different app versions to reproduce issues in a test environment. Helps narrow down the root cause.

The goal is to find patterns and reduce variables until the specific line of code, resource limit or configuration causing problems is uncovered. Using monitoring, logs, system data and tests to incrementally eliminate possibilities.
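The log-review step can be sketched as a short Python script that groups repeating error patterns. The log format, the sample lines, and the `top_errors` helper are assumptions for illustration; real logs would need a pattern that matches their own layout.

```python
import re
from collections import Counter


def top_errors(log_lines, limit=3):
    """Count ERROR/WARN messages, replacing digits so similar lines group together."""
    counts = Counter()
    for line in log_lines:
        match = re.search(r"\b(ERROR|WARN)\b\s+(.*)", line)
        if match:
            level, message = match.groups()
            normalized = re.sub(r"\d+", "N", message)
            counts[(level, normalized)] += 1
    return counts.most_common(limit)


# Hypothetical application log lines from the time the issue started
sample_log = [
    "2024-01-01T10:00:01 INFO request 101 served in 12 ms",
    "2024-01-01T10:00:02 ERROR timeout calling payment-service after 3000 ms",
    "2024-01-01T10:00:03 ERROR timeout calling payment-service after 3002 ms",
    "2024-01-01T10:00:04 WARN retrying request 102",
]

for (level, message), count in top_errors(sample_log):
    print(count, level, message)
```

A message that dominates the count right after the release is a good first lead for the tracing and configuration checks.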

98. Developers complain applications have become very slow after moving to Kubernetes and containers. What strategies would you use for troubleshooting performance issues in a container environment?

Let’s imagine our developers just moved their applications to Kubernetes and docker containers. Everything used to run pretty fast, but now it has suddenly become really slow! Developers are complaining to us DevOps engineers about this. How can we figure out why?

First, I would check some basics on the Kubernetes nodes:

- Are CPU/memory resources being overloaded on nodes? Using too many resources could slow things down.

- Is network traffic between nodes and pods unusually high? That could indicate performance issues.

Then I’d look at the applications running:

- Are the container images much larger than before? Bigger images mean slower start-up

- Are there errors or throttling happening at the app level inside containers? App logs and metrics would provide clues.

Next is checking how Kubernetes manages the application:

- Are there issues with how Kubernetes is scheduling pods on nodes? We need to make sure the spreading is even.

- Is Kubernetes auto-scaling not working quickly enough during traffic spikes? Causing temporary resource bottlenecks.

There are also tools that can help profile Kubernetes as it manages containerized apps to check for performance bottlenecks.
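As a rough sketch of the first node-level check, the Python snippet below flags nodes whose pods request more CPU than a chosen share of the node's capacity. The node names, pod data, and the 80% threshold are invented for the example; in practice the numbers would come from the cluster's own metrics.

```python
def overloaded_nodes(capacity_millicores, pod_requests, threshold=0.8):
    """Return nodes whose summed pod CPU requests exceed threshold * capacity."""
    used = {node: 0 for node in capacity_millicores}
    for pod in pod_requests:
        used[pod["node"]] += pod["cpu_m"]
    return sorted(
        node for node, cap in capacity_millicores.items()
        if used[node] > threshold * cap
    )


# Hypothetical cluster snapshot: CPU in millicores (1000m = 1 core)
capacity = {"node-a": 4000, "node-b": 4000}
pods = [
    {"name": "api-1", "node": "node-a", "cpu_m": 2000},
    {"name": "api-2", "node": "node-a", "cpu_m": 1500},
    {"name": "worker-1", "node": "node-b", "cpu_m": 500},
]

print(overloaded_nodes(capacity, pods))
```

Here one node carries most of the load while the other is nearly idle, which also points at the uneven scheduling mentioned above.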

Conclusion

We hope these DevOps interview questions will help you prepare for your upcoming interviews. If you are looking to learn DevOps in a systematic manner with expert guidance and support, then you can check our DevOps course.

In case you’re looking to start a career, or if you are already in the domain and want to make your skill set future-proof, learn some new skills and tricks from our Advanced Certification in DevOps & Cloud Computing course or DevOps Certification Course Training and get certified today.