Jenkins Tutorial Overview

In this Jenkins tutorial, we will cover Jenkins concepts end to end. We will look at one of the most distinctive features of Jenkins, the Jenkins Pipeline, and learn how to manage it. We will also see how to install Jenkins and how to create, schedule, and run builds using Jenkins plugins.

This tutorial will give you a kickstart on your DevOps journey and help you become proficient in setting up Jenkins and using it for continuous integration.



What is Jenkins?

Jenkins is an open-source automation server written in Java, with plugins for continuous integration tasks. It is used to continuously build and test projects, making it easier for developers to integrate their changes into a project.

Jenkins enables faster software delivery by working with a large number of deployment and testing technologies, and it accelerates the development phase by automating tasks. It is a server-based application that runs in a servlet container: it ships with a built-in one and can run standalone, or it can be deployed to a container such as Apache Tomcat.

Jenkins became popular for automating and monitoring repeated tasks. While a team develops a project, Jenkins continuously builds and checks the code, reporting errors and failures early in the development phase.

Since you have encountered the term “Continuous Integration”, let’s explore it further.

1. What is Continuous Integration?

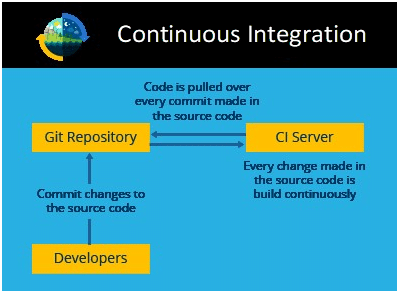

Continuous integration (CI) is one of the most vital parts of DevOps, as it ties the various stages of the DevOps lifecycle together. In other words, it is a coding practice that enables the development team to make small changes to the code and commit them to version control frequently.

In practice, all developers push their code to a shared repository, usually several times a day, and each change is built and tested automatically. CI is well suited to projects that are developed on different platforms with multiple tools, where a mechanism is needed to integrate and validate changes made to the code in parallel.

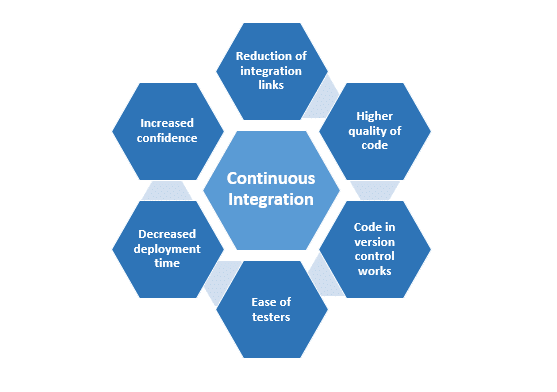

2. Why use Continuous Integration?

What exactly are the benefits of continuous integration? Why do we adopt this practice? To help answer these questions, here is a list of some of the advantages of CI.

- Reduced integration risks: Most projects have more than one developer, which greatly increases the risk of errors during integration. Depending on the complexity of the code, a large number of changes may have to be reconciled. CI alleviates these issues by integrating changes regularly.

- Higher code quality: As integration risks drop, more time and manpower can go into building functionality.

- Code in version control works: Committing something that breaks the build immediately triggers a notification, which prevents others from pulling broken code.

- Easier testing: Retaining the different versions and builds of the code helps QA engineers understand, locate, and trace bugs efficiently.

- Decreased deployment time: Automating deployment frees up a lot of time and manpower.

- Increased confidence: Knowing that failures will be caught early gives developers peace of mind, which helps them be more productive and deliver higher-quality products.

Let’s understand more about CI with the help of a use case.

3. A Sample Use Case in Jenkins

Consider an existing healthcare software system whose code is pulled from the shared repository and built at a fixed time every day. This resembles CI, except that it builds only once a day, so bugs can only be found at one point in time each day.

To make this system CI-compliant, we can instead have every change pushed to the shared repository and built as soon as it is made.

This ensures that all the developers working on the system and changing the latest code can find and resolve bugs as soon as they appear. It also keeps software rollouts up to date, which is especially important for critical systems such as healthcare.
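To make the difference concrete, here is a small Python sketch comparing how long a bug introduced by a commit waits before a build catches it under each policy. The daily build time (02:00), the 10-minute build duration, and the function names are our own illustrative assumptions, not details of the system described above.

```python
from datetime import datetime, timedelta


def nightly_detection_delay(commit_time: datetime, build_hour: int = 2) -> timedelta:
    """Time until a once-a-day build at build_hour:00 first includes the commit."""
    next_build = commit_time.replace(hour=build_hour, minute=0, second=0, microsecond=0)
    if next_build <= commit_time:
        # Today's build has already started, so the commit waits for tomorrow's.
        next_build += timedelta(days=1)
    return next_build - commit_time


def per_commit_detection_delay(commit_time: datetime, build_minutes: int = 10) -> timedelta:
    """With CI every push triggers a build, so the wait is just the build duration.

    commit_time is unused; it is kept so both policies share one signature.
    """
    return timedelta(minutes=build_minutes)


for commit in (datetime(2024, 5, 6, 9, 15), datetime(2024, 5, 6, 17, 40)):
    print(commit.time(), nightly_detection_delay(commit), per_commit_detection_delay(commit))
```

Under these assumptions, a commit made at 09:15 waits 16 hours and 45 minutes for the nightly build, but only 10 minutes when every push is built.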


How does Jenkins work?

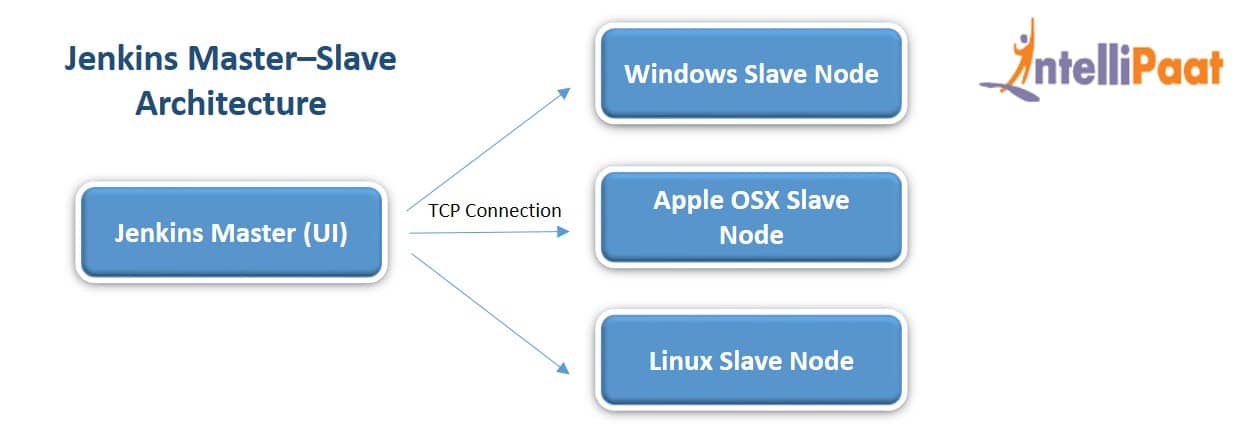

A standalone Jenkins instance can be an intensive consumer of disk and CPU resources. To avoid this, we can scale out by adding slave nodes that offload part of the master node’s responsibilities. A slave is simply a machine configured to act as an executor on behalf of the master. The master is the base installation of Jenkins: it performs the basic operations and serves the user interface, while the slaves do the actual build work. (Current Jenkins documentation refers to these as the controller and agents.)

In the image above, the Jenkins master is in charge of the UI, and the slave nodes run different OS types.
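The division of labour can be illustrated with a toy Python model. This is not Jenkins’s actual scheduler: the `Agent` and `Master` classes, the label strings, and the first-free-match policy are hypothetical, chosen only to show a master that routes jobs to slaves of different OS types without running builds itself.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Agent:
    """A hypothetical slave node with an OS label and a fixed number of executors."""
    name: str
    label: str
    executors: int
    running: List[str] = field(default_factory=list)

    def is_free(self) -> bool:
        return len(self.running) < self.executors


@dataclass
class Master:
    """In this model the master only routes jobs; it runs no builds itself."""
    agents: List[Agent]

    def dispatch(self, job: str, label: str) -> Optional[str]:
        """Send the job to the first free agent with a matching label, else return None."""
        for agent in self.agents:
            if agent.label == label and agent.is_free():
                agent.running.append(job)
                return agent.name
        return None  # no free matching agent: the job would wait in the build queue


master = Master([
    Agent("linux-1", "linux", executors=2),
    Agent("win-1", "windows", executors=1),
])
print(master.dispatch("build-api", "linux"))    # linux-1
print(master.dispatch("build-gui", "windows"))  # win-1
print(master.dispatch("test-gui", "windows"))   # None, because win-1's only executor is busy
```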


Jenkins Master–Slave Installation on AWS

So, how would one go about the installation of this master and slave system on AWS? Let’s see.

1. Creating a Master

Step 1: Create a new EC2 instance with Amazon Linux AMI 2016.03

Step 2: Update the system and install Jenkins on it with the following commands:

$ yum update -y

$ wget -O /etc/yum.repos.d/jenkins.repo https://pkg.jenkins.io/redhat/jenkins.repo

$ rpm --import http://pkg.jenkins-ci.org/redhat/jenkins-ci.org.key

$ yum install -y jenkins

Note: Jenkins must be installed either as the root user or with sudo access.

Step 3: Edit /etc/sysconfig/jenkins, the configuration file read by the Jenkins service, to set the environment variables and options used by Jenkins and its plugins, and remember to change the default time zone to our local one.

Step 4: Now that the configuration is updated, we only need to register the service and start it. Do so with the following commands:

$ chkconfig jenkins on

$ service jenkins start

Note: The above steps will also work for Jenkins installation on CentOS.

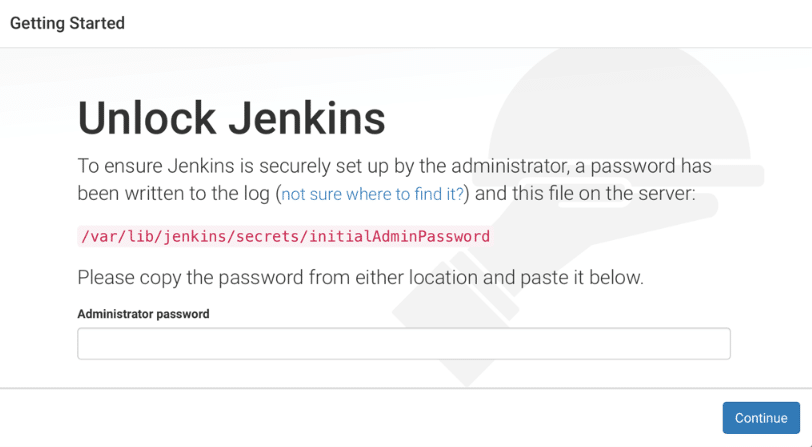

Step 5: Jenkins is now enabled and running. Open http://<SERVER-IP>:8080 in a browser. When redirected to the ‘Getting Started’ screen, retrieve the initial admin password and use it to unlock Jenkins.
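On a standard package installation, the unlock screen points to a file holding the generated password, typically /var/lib/jenkins/secrets/initialAdminPassword. If the path shown on screen differs, that path is authoritative. A minimal Python helper to read it might look like this. Run it on the master as root or with sudo. The function name is our own.

```python
from pathlib import Path

# Typical location on package installs; confirm against the path shown on the unlock screen.
DEFAULT_PASSWORD_FILE = Path("/var/lib/jenkins/secrets/initialAdminPassword")


def read_initial_admin_password(path: Path = DEFAULT_PASSWORD_FILE) -> str:
    """Return the one-time password used to unlock Jenkins after its first start."""
    if not path.exists():
        raise FileNotFoundError(f"{path} not found: has Jenkins finished its first start?")
    # The file holds a single token followed by a newline, so strip surrounding whitespace.
    return path.read_text().strip()
```

Calling `read_initial_admin_password()` on the master prints nothing by itself. Print its return value and paste it into the unlock form.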


Now, we have an empty master running! So, let’s configure the slaves!

2. Configuring the Slaves in Jenkins

We will have to configure the slaves and update them in the master configuration.

Step 1: Create a new EC2 instance just as before, working as root or with sudo access

Step 2: Install the base dependencies, such as Java, Git, Docker, and Docker Compose, with the following commands:

$ yum install -y docker git java-1.8.0-openjdk

$ curl -L https://github.com/docker/compose/releases/download/1.6.2/docker-compose-`uname -s`-`uname -m` > /usr/local/bin/docker-compose

$ chmod +x /usr/local/bin/docker-compose

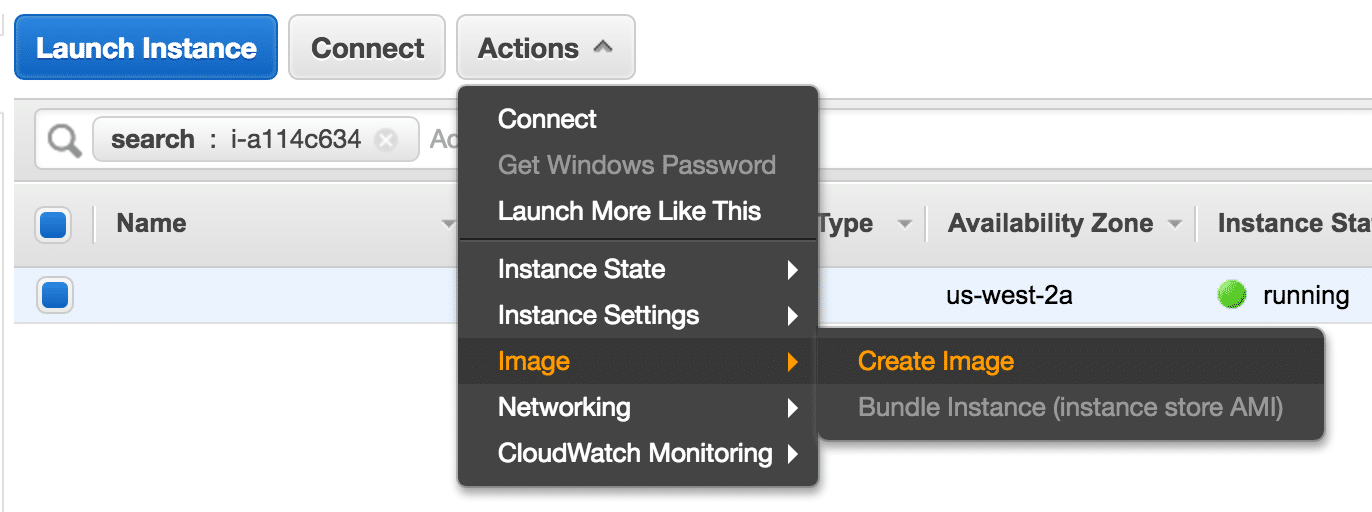

Step 3: Create an AMI on AWS. In the EC2 console, select the slave instance we just configured and create a new image from it using the instance’s Actions menu. Once the image is created, note down its AMI ID.

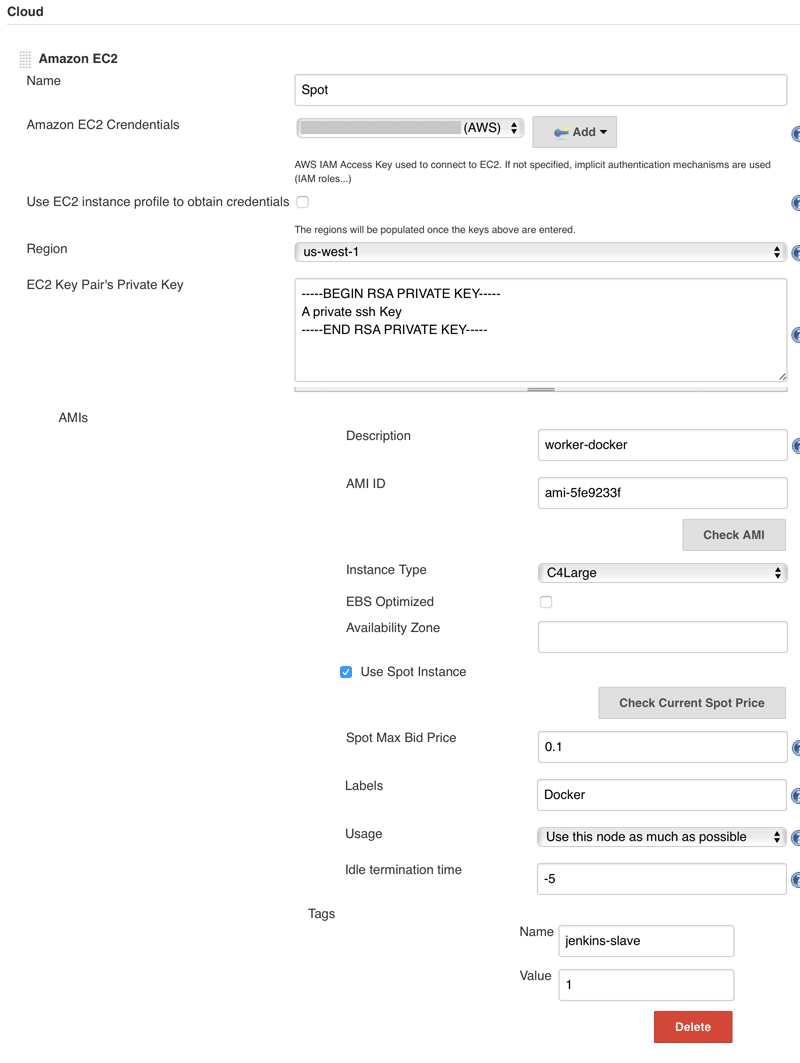
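Step 3 can also be scripted with boto3’s EC2 `create_image` call, which returns the new AMI ID. This is a sketch: the instance ID and image name are placeholders, and the function accepts any EC2 client object so it can be exercised without AWS access.

```python
from typing import Any


def create_slave_ami(ec2_client: Any, instance_id: str, name: str) -> str:
    """Create an AMI from the configured slave instance and return its AMI ID.

    ec2_client is expected to be a boto3 EC2 client, e.g. boto3.client("ec2").
    """
    response = ec2_client.create_image(
        InstanceId=instance_id,
        Name=name,
        Description="Jenkins slave with Java, Git, Docker and Docker Compose",
    )
    return response["ImageId"]


# Usage (requires boto3 and AWS credentials; the IDs are placeholders):
#   import boto3
#   ec2 = boto3.client("ec2", region_name="us-east-1")
#   ami_id = create_slave_ami(ec2, "i-0123456789abcdef0", "jenkins-slave")
#   ec2.get_waiter("image_available").wait(ImageIds=[ami_id])
#   print(ami_id)
```

By default, EC2 reboots the instance while creating the image, so it is best to do this before the slave is in use.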

Step 4: Now, configure the master to use the AMI. To do so, follow these steps:

- Create AWS credentials in Jenkins (an access key ID and secret access key)

- When adding them, limit the scope to System and complete the form

- To add AWS as the cloud provider (this requires the Amazon EC2 plugin), go to:

Manage Jenkins > Configure System > Add a new cloud > Amazon EC2 > Complete the form as needed, including the AMI ID noted in Step 3

Now, we have a master and a slave configured successfully!

Refer to the screenshot below to see the sample setup screen. We can practice Jenkins installation with other specifications of our choice. This is only the default, generalized setup.

Now that we understand how Jenkins works, let us look at how to install Jenkins plugins.


Installing Jenkins Plugins

One of the core features of Jenkins is its plugin system. Plugins add functionality on top of the core, giving us more powerful tools for our project.

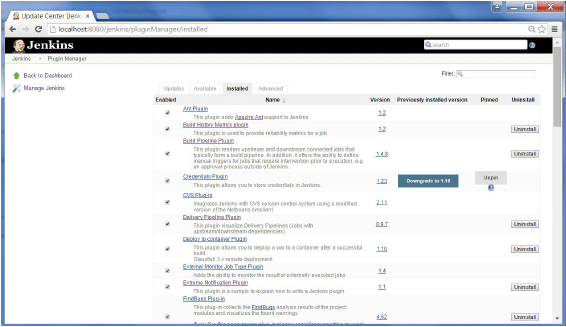

Now, let’s look into how we can list, add, modify, update, and remove these plugins from Jenkins.

- To list all the plugins supported by Jenkins, go to Jenkins plugins index.

- Once logged in, head over to the ‘Manage Jenkins’ tab on the left-hand side. This is where we would handle all the installed plugins, as well as add or remove new ones

- Under the Manage Plugins tab, we can search for a plugin or see all the available plugins

- By selecting a plugin and clicking on Install without restart, we can install the plugin and check its functionality sooner, rather than having to wait to restart Jenkins

- When we need to uninstall a plugin, head over to the Installed tab, select the plugin that we would like to remove and click on Uninstall. However, we must make sure to restart Jenkins for the changes to reflect.

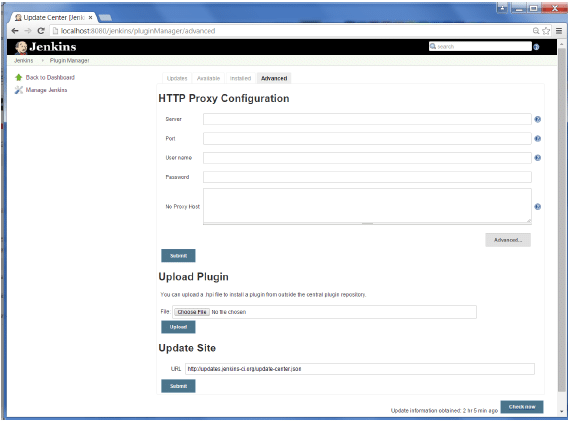

- In some cases, we would like to use an older version of a certain plugin. In such a situation, we have to download the needed plugin from the desired site and then upload it onto Jenkins manually.

- If we have created our own plugins, we have to upload them to the site and help further grow the community base.
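Installed plugins can also be listed from a script through the Jenkins JSON API at `/pluginManager/api/json?depth=1`. This endpoint returns a `plugins` array whose entries include `shortName`, `version`, and `active`. The sketch below assumes basic authentication with a user name and API token. The host and credentials are placeholders. Parsing is kept separate from the HTTP call so that it can be tried offline.

```python
import base64
import json
import urllib.request
from typing import List, Tuple

PluginInfo = Tuple[str, str, bool]  # (shortName, version, active)


def parse_plugins(payload: str) -> List[PluginInfo]:
    """Turn a /pluginManager/api/json response into sorted (name, version, active) tuples."""
    data = json.loads(payload)
    return sorted(
        (p["shortName"], p["version"], bool(p.get("active", False)))
        for p in data.get("plugins", [])
    )


def fetch_plugins(base_url: str, user: str, api_token: str) -> List[PluginInfo]:
    """Query a running Jenkins, e.g. base_url="http://SERVER-IP:8080" (placeholder)."""
    url = f"{base_url.rstrip('/')}/pluginManager/api/json?depth=1"
    credentials = base64.b64encode(f"{user}:{api_token}".encode()).decode()
    request = urllib.request.Request(url, headers={"Authorization": f"Basic {credentials}"})
    with urllib.request.urlopen(request, timeout=10) as response:
        return parse_plugins(response.read().decode("utf-8"))
```

Sorting by plugin name makes it easy to compare the list before and after installing, updating, or removing plugins.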

Next in this Jenkins tutorial, we will learn how to create Jenkins builds.

Creating Jenkins Builds

A build is the process of converting source code into a usable, runnable form, typically by compiling it into an executable. The build process is usually handled by a build tool.

Builds are usually run when we reach a critical point, such as the integration of a feature. Because Jenkins is built for CI, it offers a powerful feature: we can automate the build process to run at a particular time or in response to an event. Builds that run at set times are called ‘scheduled builds.’

1. Creating Scheduled Builds

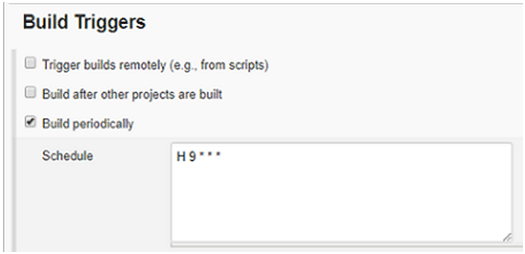

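Now, let’s see how we can schedule builds to run at certain times. To schedule a build, follow these steps:

Step 1: In the job’s ‘Build Triggers’ section, check the ‘Build periodically’ box

Step 2: In the Schedule text box, enter a cron-style expression describing when the build should run

The general syntax is five fields separated by spaces: MINUTE (0-59) HOUR (0-23) DAY OF MONTH (1-31) MONTH (1-12) DAY OF THE WEEK (0-7, where both 0 and 7 mean Sunday). Any field can be * (every value). The H token tells Jenkins to pick a value derived from a hash of the project name, which spreads the load of many jobs across the allowed range.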

Now, let’s look at some examples of how to schedule builds.

- To start a build every weekday (Monday to Friday) at 8:30 AM, use 30 08 * * 1-5

- To build once every weekday afternoon at some time between 4:00 and 4:59 PM, chosen by the project hash, use H 16 * * 1-5

- To build at exactly midnight every day, use 0 0 * * *. Note that Jenkins’s @midnight alias means some time between 12:00 AM and 2:59 AM (H H(0-2) * * *), not exactly midnight

- To build once every hour, at a minute chosen by the project hash, use H * * * *

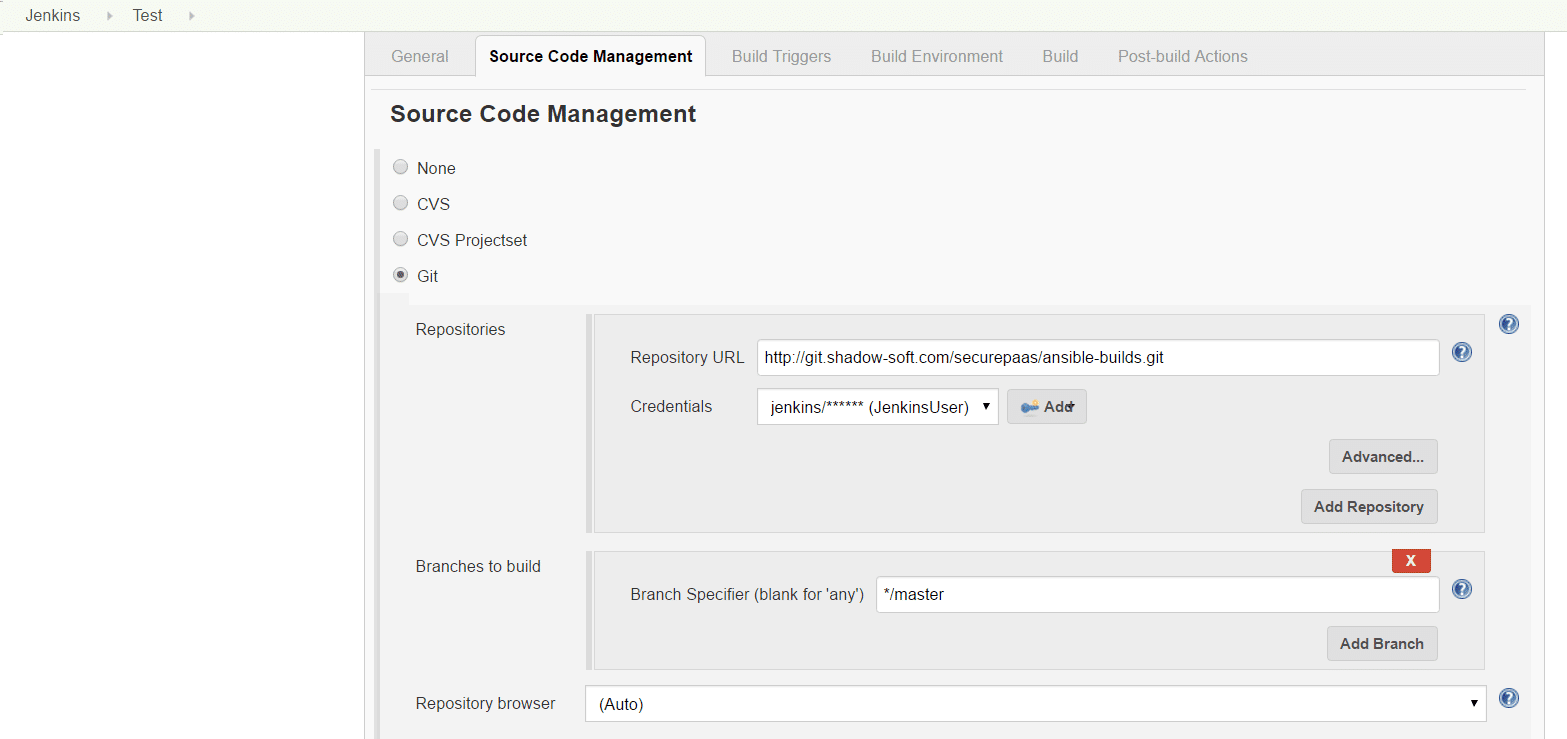
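To check what a plain expression means before saving it, the five fields can be matched against a date in Python. This simplified matcher is our own sketch. It supports `*`, single values, ranges, comma lists, and `/` steps. It does not support the hash-based `H` token or aliases such as `@midnight`, because the exact hash Jenkins uses is internal to Jenkins. It also requires every field to match, which simplifies classic cron’s rule for the case where both day fields are restricted.

```python
from datetime import datetime
from typing import Set

# (low, high) bounds for MINUTE, HOUR, DAY OF MONTH, MONTH, DAY OF WEEK.
FIELD_BOUNDS = [(0, 59), (0, 23), (1, 31), (1, 12), (0, 7)]


def expand_field(spec: str, low: int, high: int) -> Set[int]:
    """Expand one field such as '*', '30', '1-5', '0,30' or '*/15' into its values."""
    values: Set[int] = set()
    for part in spec.split(","):
        step = 1
        has_step = "/" in part
        if has_step:
            part, step_text = part.split("/")
            step = int(step_text)
        if part == "*":
            start, end = low, high
        elif "-" in part:
            start_text, end_text = part.split("-")
            start, end = int(start_text), int(end_text)
        else:
            start = int(part)  # raises ValueError for unsupported tokens such as H
            # "5/10" means 5, 15, 25, ... up to the field maximum.
            end = high if has_step else start
        if not low <= start <= end <= high:
            raise ValueError(f"{spec!r} is outside the range {low}-{high}")
        values.update(range(start, end + 1, step))
    return values


def cron_matches(expression: str, moment: datetime) -> bool:
    """Return True if a build scheduled with `expression` would fire at `moment`."""
    fields = expression.split()
    if len(fields) != 5:
        raise ValueError("expected 5 fields: MINUTE HOUR DOM MONTH DOW")
    minute, hour, dom, month, dow = (
        expand_field(spec, lo, hi) for spec, (lo, hi) in zip(fields, FIELD_BOUNDS)
    )
    if 7 in dow:
        dow.add(0)  # both 0 and 7 mean Sunday
    cron_weekday = (moment.weekday() + 1) % 7  # Python: Monday=0; cron: Sunday=0
    return (
        moment.minute in minute
        and moment.hour in hour
        and moment.day in dom
        and moment.month in month
        and cron_weekday in dow
    )
```

For instance, `1-6` in the day-of-week field also matches Saturday. The range `1-5` covers Monday to Friday only.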

2. Git Webhook

We have seen how to automate builds in Jenkins with respect to a particular time or date.

What if we want a build to run every time we push code?

- To do exactly this, install the ‘GitLab Hook Plugin’, just as we have seen in the sections above

- Start a new project if one is not already created, scroll down to ‘Source Code Management’, and add the URL of the Git repository along with the required credentials

- Now, add the steps that we want performed during the build: click ‘Add build step’ and choose the appropriate build step

Note: This step depends on what we want to do and on the environment. Check out the screenshot below to see the setup page.

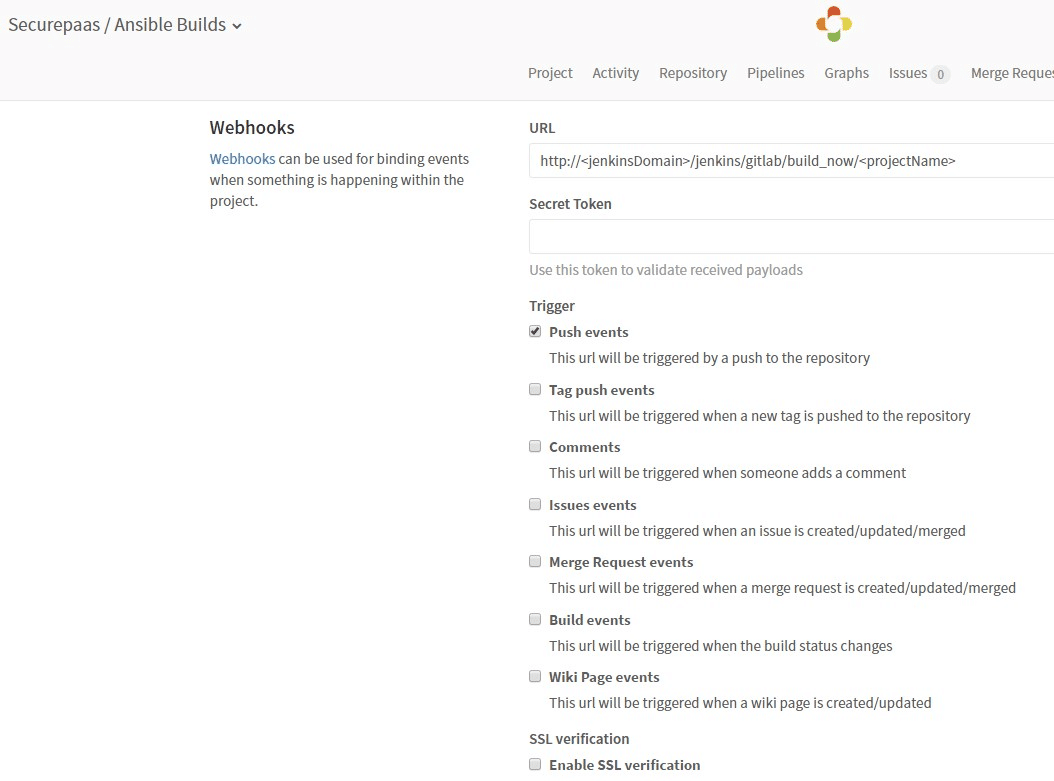

Next, we need to add a webhook for our repository in GitLab.

- Open the project in GitLab, select the settings (cog) icon, and choose Webhooks

- Fill in the URL field with http://<jenkinsDomain>/jenkins/gitlab/build_now/<projectName>

- Select the events that should trigger a build (for example, push events) by checking the boxes, as shown in the screenshot above

- Click the Add webhook button at the end of the page, and that’s it! We’re all set to go!
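The project name forms part of the webhook path, so names that contain spaces or other special characters must be percent-encoded. Below is a small Python helper that assembles the URL in the format given above. The function name is hypothetical. We also assume that the `/jenkins` segment is the context path Jenkins is served under, so it is a parameter that can be set to empty.

```python
from urllib.parse import quote


def gitlab_hook_url(jenkins_domain: str, project_name: str, context_path: str = "/jenkins") -> str:
    """Build the GitLab Hook 'build_now' URL for a Jenkins project.

    context_path is the path Jenkins is served under; pass "" if it runs at the root.
    """
    prefix = context_path.rstrip("/")
    # safe="" also encodes "/" so the project name stays a single path segment.
    return f"http://{jenkins_domain}{prefix}/gitlab/build_now/{quote(project_name, safe='')}"


print(gitlab_hook_url("ci.example.com", "healthcare app"))
```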


Let us move forward in this Jenkins tutorial to Jenkins pipelines, arguably the most important feature of Jenkins.

Jenkins Pipelines

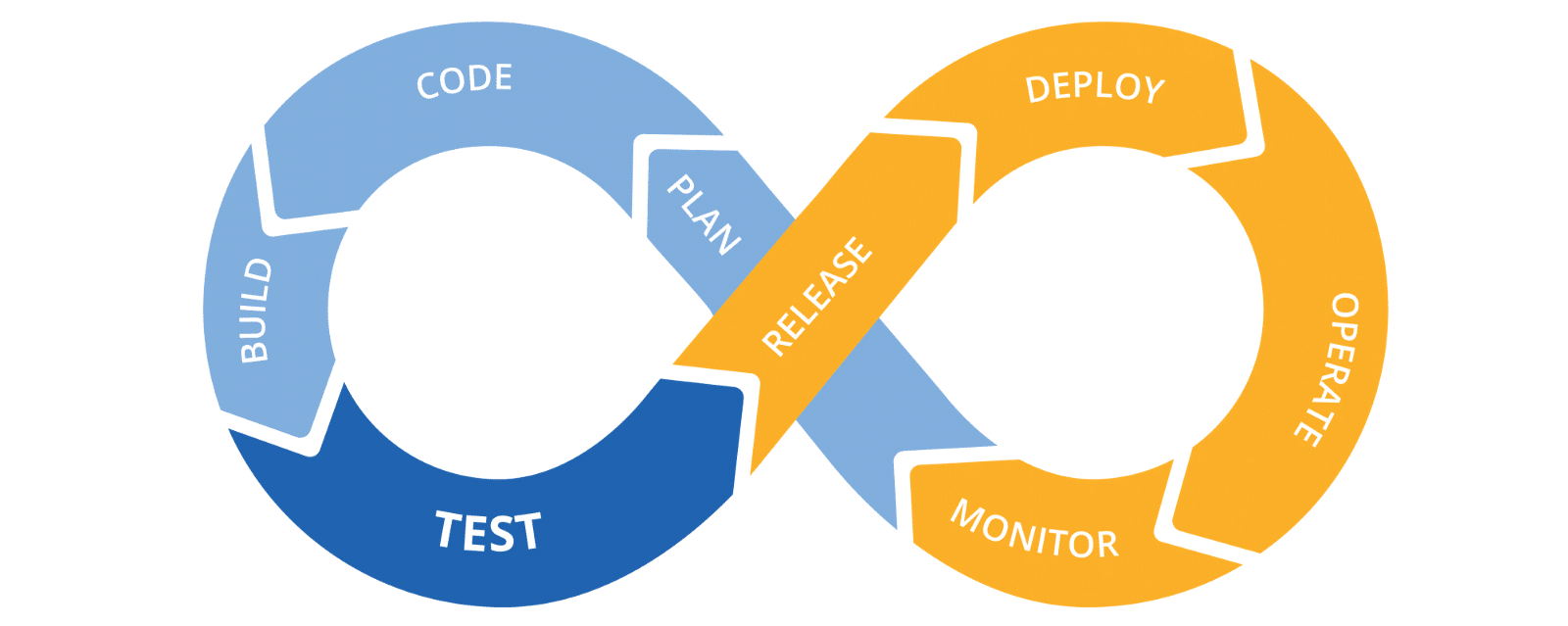

What is CI/CD?

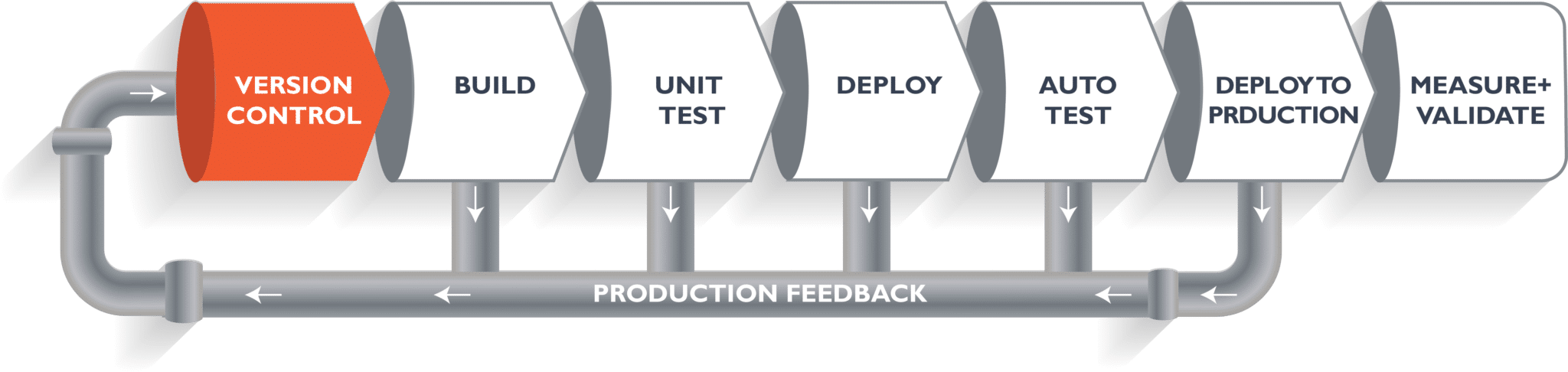

What exactly is ‘continuous integration and continuous delivery (CI/CD)’? To put it plainly, it is a coding practice that drives the development team to make small changes and commit them to version control frequently.

The primary goal of CI, as mentioned earlier in this tutorial, is to provide an automated way to build and test applications. Higher software quality is expected, since changes are integrated frequently and the team collaborates better. Continuous delivery is an extension of CI: it picks up the process where CI stops.

Continuous delivery (CD) lets us automate all releases to the defined infrastructure, providing an automated way to push code changes. It also performs the required service calls to servers, databases, and other components that may need to be restarted.

What is the CI/CD Jenkins Pipeline?

Another term we come across most often when talking about CI/CD is the ‘CI/CD Jenkins Pipeline.’ What exactly is it? What does it do?

The tasks and jobs that transform source code into a releasable form are typically strung together into a pipeline, where each task that completes with a successful status starts the next automated process in the sequence. Such pipelines are also referred to as ‘CD Pipeline,’ ‘Deployment Pipeline,’ or ‘Software Dev Pipeline.’

A supervisory application takes charge of managing, running, monitoring, and reporting different parts of the pipeline as they keep getting executed. Now, let’s look into how this actually works.

Real-world implementations differ depending on the type of project at hand. Although the overall workflow is the same, the details of source tracking, building, gathering metrics, and testing vary.


The supervisory application mentioned above manages processes that each have their own jobs. A job is created to perform a function such as testing or building, as stated before. The key point is that all the jobs are automated, repeatable, and efficient. Once a job returns a successful status, the supervisory app triggers the next job in the sequence. Thanks to automation, errors that occur in any step can be identified and fixed as early as possible. This is often referred to as ‘fail fast.’

‘Fail fast’ is the idea of finding errors as soon as possible and notifying the respective teams. An advantage of this feature is that the software looks at the process history and assigns the error to the respective teams so that they can handle and resolve it.
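The fail-fast behaviour of the supervisory application can be modelled as a loop that runs stages in order and stops at the first failure. In this Python sketch, the stage names, the `stage` helper, and the notification callback are hypothetical. In Jenkins, each stage would be a job.

```python
from typing import Callable, List, Optional, Tuple

Stage = Tuple[str, Callable[[], bool]]  # (stage name, action returning True on success)


def run_pipeline(stages: List[Stage], notify: Callable[[str], None]) -> Optional[str]:
    """Run stages in sequence; on the first failure, notify and stop (fail fast).

    Returns the name of the failed stage, or None if every stage succeeded.
    """
    for name, action in stages:
        if not action():
            notify(f"Stage '{name}' failed; later stages were skipped.")
            return name
    return None


ran: List[str] = []  # records which stages actually executed


def stage(name: str, ok: bool = True) -> Stage:
    """Make a fake stage that records its name and succeeds or fails as told."""
    def action() -> bool:
        ran.append(name)
        return ok
    return name, action


failed = run_pipeline(
    [stage("checkout"), stage("build"), stage("test", ok=False), stage("deploy")],
    notify=print,
)
print(failed)  # test
print(ran)     # ['checkout', 'build', 'test'], so deploy never ran
```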

Creating a CI/CD Jenkins Pipeline

Now that we know what a Jenkins pipeline is, let’s dig deeper to find out how to create a Jenkins CI/CD pipeline from scratch.

Jenkins is a powerful tool that we can use to automate the entire DevOps process with the help of various interfaces and tools. The Git repository is typically where the Dev team commits the code.

Jenkins then takes over, with its web front end used to define the jobs or tasks. Jenkins pulls the code and moves it to the commit phase. Next comes the build phase, where the code is compiled. Finally, the code moves on to the staging area, where Docker is used to deploy it.

Now, we’ll get into creating the Jenkins pipeline with the help of Jenkins and Docker.

Step 1: Open the terminal in the VM and run the following:

systemctl start jenkins

systemctl enable jenkins

systemctl start docker

Step 2: Open Jenkins on the specified port and click ‘New Item’ to create a new job

Step 3: Select the ‘Freestyle project’ option and enter an item name of our choice

Step 4: Under ‘Source Code Management’, provide the Git repository. Then, click Apply and Save

Step 5: Under ‘Build’, add an ‘Execute shell’ build step

Step 6: Now, provide the shell commands. They pull the code, install the package along with its dependencies, and compile the application, generating a .war file

Step 7: Repeat Steps 3 to 5 for a new job and provide its shell commands. This job starts the integration and the build in Docker

Step 8: Repeat Steps 3 to 5 once more with a different job name and provide the shell commands as before. This job checks the Docker container file and deploys it to the predefined port

Step 9: Now, to chain the jobs, open Job1’s configuration, go to ‘Post-build Actions’, and choose ‘Build other projects’

Step 10: Provide the name of the project to trigger next, and then save

Step 11: Repeat Steps 9 and 10 to configure Job2
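Steps 9 to 11 chain the jobs so that each one names the project to build after it. The following Python sketch shows how such ‘Build other projects’ settings determine the order of a run, and how an accidental loop would be caught. It simplifies things to one downstream project per job. The job names Job1, Job2, and Job3 and the function are illustrative.

```python
from typing import Dict, List


def downstream_order(start: str, downstream: Dict[str, str]) -> List[str]:
    """Follow 'Build other projects' links from `start` and return the run order."""
    order = [start]
    seen = {start}
    current = start
    while current in downstream:
        current = downstream[current]
        if current in seen:
            raise ValueError(f"cycle detected at {current!r}")
        order.append(current)
        seen.add(current)
    return order


chain = {"Job1": "Job2", "Job2": "Job3"}
print(downstream_order("Job1", chain))  # ['Job1', 'Job2', 'Job3']
```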

Step 12: To create a pipeline view of the jobs, click the plus (+) symbol, select ‘Build Pipeline View’ (provided by the Build Pipeline plugin), and then provide a view name

Step 13: Now select ‘Run’, which starts the CI/CD process in the system

Step 14: After the build is complete, go to ‘localhost:8180/sample.text’ to see the running application
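Once the pipeline finishes, an automated check that the deployed application responds can stand in for opening the page by hand. This Python sketch uses only the standard library. The URL comes from Step 14, and treating an HTTP 200 answer as healthy is our own assumption.

```python
import urllib.request


def is_app_up(url: str, timeout: float = 5.0) -> bool:
    """Return True if `url` answers with HTTP 200 within `timeout` seconds."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except OSError:
        # URLError, HTTPError (e.g. a 404) and socket timeouts are all OSError subclasses.
        return False


# Example: is_app_up("http://localhost:8180/sample.text")
```

A check like this could run as the final shell step of the deploy job, failing the run when the application does not come up.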


Conclusion

As we come to the end of this Jenkins tutorial, let us recall the major concepts we covered: what Jenkins is and its role in the CI/CD space, how to set up Jenkins with masters and slaves, the huge world of plugins available for Jenkins, and much more. We suggest trying out newer plugins and uploading your own to the community to keep it alive.

We also learned how to create builds and how to schedule them as per our needs. Creating CI/CD pipelines is one of the most important uses of Jenkins, and we have taken a look at it as well. As a next step, we should create our own jobs and schedule them with different parameters to gain a more in-depth working knowledge of them.

Jenkins is one of the most valuable DevOps tools at our disposal, and skills in Jenkins can be an invaluable asset. To learn more and get certified, head over to Intellipaat to find the best trainers and e-learning courses.
